Summary

AI is currently 2x better at exploitation than detection, and AI-powered exploits cost just US$1.22 per contract — falling another 22% every two months. Attackers scale faster than defenders unless platforms commit serious AI investment now.

AI-enabled scams are 4.5x more profitable than traditional ones, and impersonation tactics surged 1,400% year-on-year in 2025. Crypto accounts for 88% of all detected deepfake fraud cases globally.

75% of financial institutions plan to further increase AI spending on financial crime detection. JPMorgan's AI systems prevented an estimated US$1.5B in fraud losses, and Binance's enhanced detection blocked US$10.53B from 2025 to Q1 2026.

Tether has frozen over US$4.4B to date, and the T3 Financial Crime Unit froze US$300M in its first year alone. Combined with exchange-level AI detection, this layered model is closing recovery gaps in ways that pure TradFi rails cannot match.

The Industrialization of AI-Powered Threats

The financial crime landscape has entered a new phase. Nasdaq Verafin estimates global illicit financial activity has surged by US$1.3 trillion since 2023, reaching US$4.4 trillion in 2025 — a 19.2% compound annual growth rate driven in large part by criminal networks weaponizing AI to industrialize scam playbooks. Crypto sits squarely in the path of this shift.
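
The growth rate quoted above can be sanity-checked from the two dollar figures in the same sentence. The sketch below assumes the US$1.3 trillion increase is measured from a 2023 baseline to the 2025 total, which gives a two-year window; the function and variable names are illustrative only.

```python
def cagr(start: float, end: float, years: float) -> float:
    """Compound annual growth rate between a start and end value."""
    return (end / start) ** (1.0 / years) - 1.0

# Figures quoted in the text, in US$ trillions.
total_2025 = 4.4
increase_since_2023 = 1.3
total_2023 = total_2025 - increase_since_2023  # implied baseline, about 3.1

# Assumption: the increase spans two full years (2023 to 2025).
growth = cagr(total_2023, total_2025, years=2)
print(f"Implied CAGR 2023-2025: {growth:.1%}")
```

The result lands at roughly 19%, consistent with the quoted 19.2% once rounding in the headline figures is allowed for.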

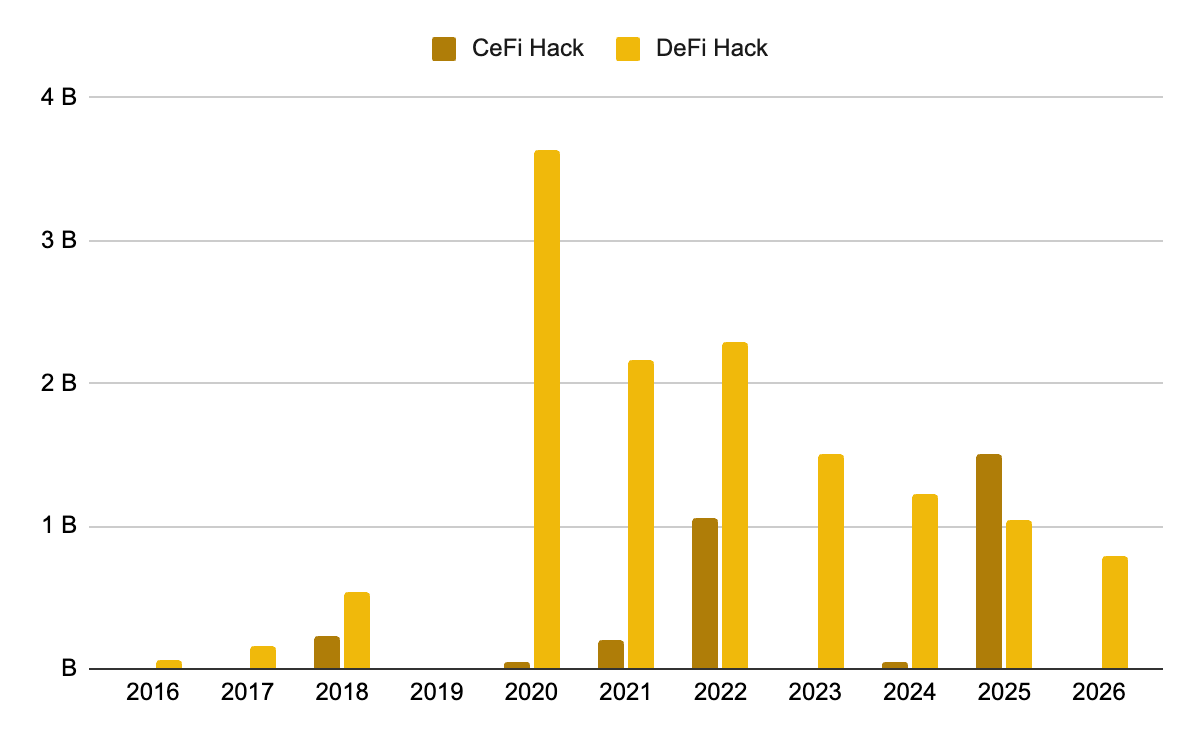

Over the longer run, DeFi hacks have steadily declined from US$3.6B in 2020 to US$1B in 2025, and no major CEX-targeted exploit has succeeded so far in 2026. But monthly DeFi hacks surged to US$621M in April 2026, the highest since March 2022, showing that the threat has not been neutralized, only changed shape. 66% of these exploits stemmed from compromised access controls, with social engineering and DNS attacks as the dominant tactics.

Figure 1: Targeted exploitation in DeFi and CeFi has steadily declined

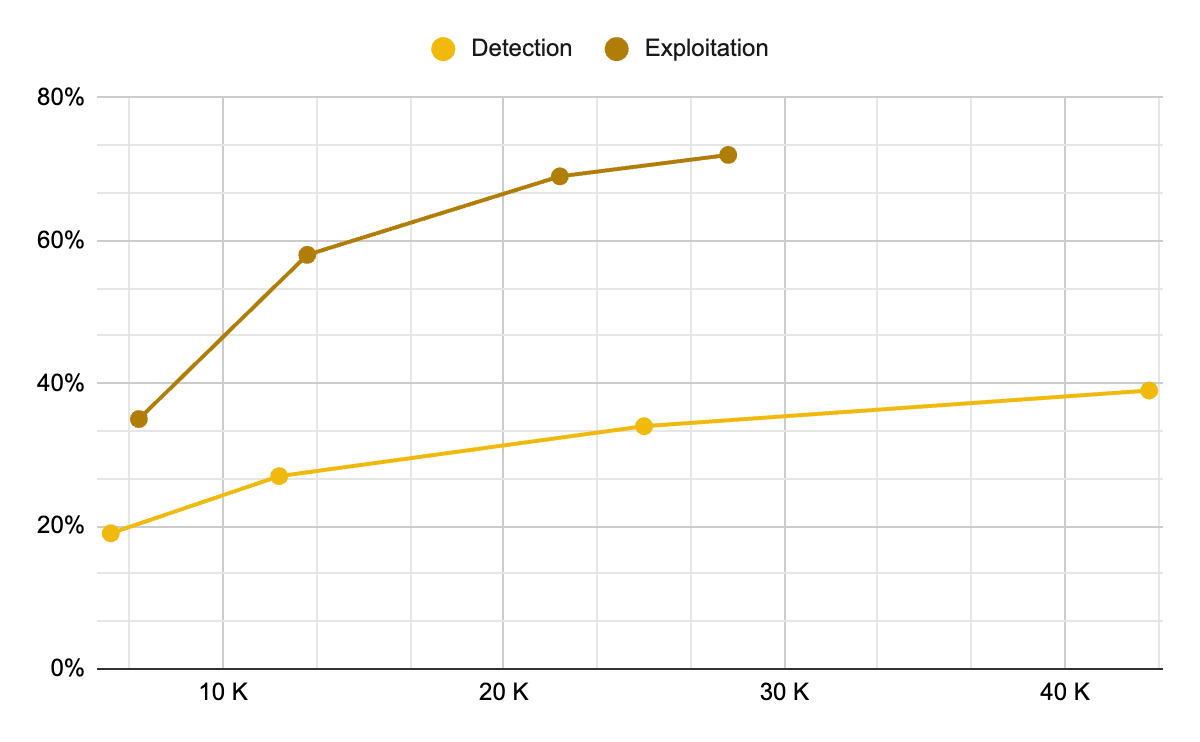

The economics now favor attackers. AI-powered exploits cost roughly US$1.22 per contract on average, and that cost is projected to fall by an additional 22% every two months — collapsing the barrier to large-scale automated scanning. GPT-5.3-Codex achieves a 72.2% success rate in "exploit" mode on EVMbench, while the "detect" success rate runs at only half that. Hacken's SSDLC Maturity Survey shows over 80% of developers now use AI in development, but fewer than 40% use AI for advanced testing — leaving the offense-defense gap structurally lopsided.
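
To make the cost trajectory concrete, the sketch below compounds the quoted 22% decline every two months from the US$1.22 starting point. It assumes the decline continues at a constant rate, which is an extrapolation for illustration rather than a forecast from the source; the constant and function names are hypothetical.

```python
BASE_COST_USD = 1.22        # average cost per contract, from the text
DECLINE_PER_PERIOD = 0.22   # 22% decline per period, from the text
PERIOD_MONTHS = 2           # one period is two months

def projected_cost(months_ahead: float) -> float:
    """Cost per contract after compounding the decline over the given horizon."""
    periods = months_ahead / PERIOD_MONTHS
    return BASE_COST_USD * (1.0 - DECLINE_PER_PERIOD) ** periods

for months in (0, 6, 12, 24):
    print(f"{months:>2} months: US${projected_cost(months):.2f} per contract")

# Equivalent annual decline implied by six consecutive two-month periods.
annual_decline = 1.0 - projected_cost(12) / BASE_COST_USD
print(f"Implied annual decline: {annual_decline:.0%}")
```

Under this constant-rate assumption, the per-contract cost falls by roughly three quarters within a year, which is why the barrier to large-scale scanning erodes so quickly.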

Figure 2: Models Score Higher in Exploitation than Detection on EVMbench

Scams have grown in parallel. Chainalysis projected crypto scams to reach US$17B in 2025, a 30% year-over-year increase. AI-enabled scams extract 4.5x more money per scam and generate 9x more transaction activity, powering deepfakes, phishing bots, fake platforms, voice cloning, and impersonation across chat applications. Impersonation tactics alone surged by a staggering 1,400% year-on-year. About 60% of industry respondents cite increased AI use by criminals as the main driver of risk exposure in 2025. Crypto bears a disproportionate share of this burden — the sector now accounts for 88% of all detected deepfake fraud cases globally, and deepfake-related losses in North America alone exceeded US$410M in the first half of 2025. Deloitte projects gen-AI-enabled fraud across financial services could reach US$40B annually by 2027.

AI-Powered Risk Management

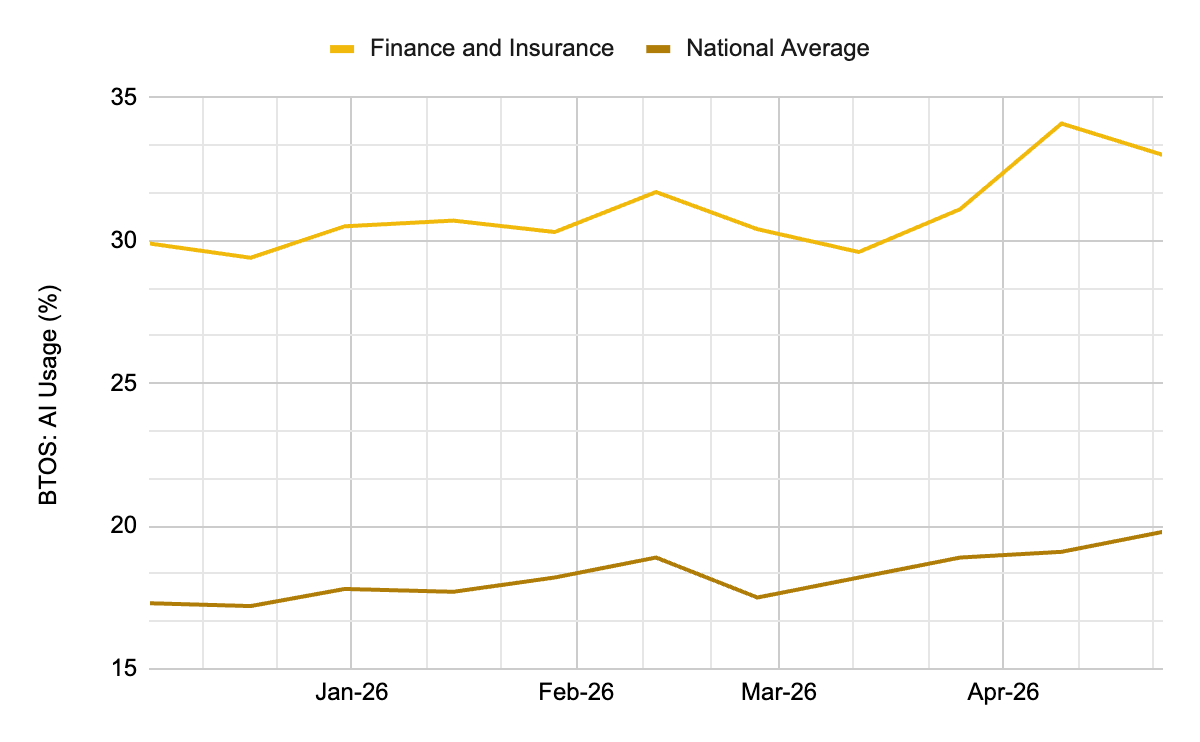

Whether we welcome it or not, AI is currently 2x better at exploitation than at detection. Platforms have no choice but to be both proactive and reactive — and the spending reflects that reality. Global financial services AI spending reached approximately US$58B in 2025 and is on track for US$97B by 2027. The financial industry needs AI more urgently than many sectors because it operates at high speed, high scale, under constant attack, and under strict regulatory pressure — making real-time intelligence both a competitive and security necessity. The US Census Bureau places AI usage in Finance and Insurance at 33%, well above the 20% national average.

Figure 3: The financial sector outpaces the average sector in AI adoption

According to Nasdaq Verafin's 2026 Global Financial Crime Report, 75% of financial institutions plan to increase their AI use for financial crime detection, with the world's largest banks committing to a further 20% increase in AI investment over the next year. The TradFi numbers show what's already possible at scale: JPMorgan Chase's AI-powered fraud detection systems have prevented an estimated US$1.5B in losses by identifying and blocking suspicious activity in real time.

Crypto exchanges are following the same trajectory. Binance has set up 24+ AI initiatives across compliance, with 100+ AI models powering anti-fraud controls — reducing illicit fund exposure by 96%. Its custom-built risk and fraud detection tool, Strategy Factory, combines business-aware optimization, modular rule construction, and continuous refinement to deliver precise, adaptable risk detection that better protects users.

In many fraud cases, identifying scams efficiently requires multi-dimensional detection that looks for anomalies across device, fund flow, content, behavior, intent, and other signals. In P2P scenarios, fund flows may look perfectly normal in isolation; the fraud becomes visible only when dialogue signals are combined with behavioral data.
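
One simple way to picture why combining dimensions matters is a noisy-OR score, where several individually weak signals add up to a strong one. This is a minimal sketch and not a description of Binance's actual models: the signal names, per-signal scores, and threshold are all hypothetical, and the noisy-OR rule itself is a swapped-in textbook technique that assumes the signals are independent.

```python
from math import prod

def combined_risk(signals: dict[str, float]) -> float:
    """Noisy-OR combination: probability that at least one signal is a true alarm.

    Each value is a per-dimension risk score in [0, 1]; independence is assumed.
    """
    return 1.0 - prod(1.0 - score for score in signals.values())

ALERT_THRESHOLD = 0.6  # hypothetical cut-off for escalating a trade

# A P2P trade whose fund flow looks normal when viewed alone.
fund_flow_only = {"fund_flow": 0.10}

# The same trade once chat content and account behavior are scored as well.
all_dimensions = {"fund_flow": 0.10, "dialogue": 0.45, "behavior": 0.40}

for label, signals in (("fund flow only", fund_flow_only),
                       ("all dimensions", all_dimensions)):
    risk = combined_risk(signals)
    print(f"{label}: risk={risk:.3f}, alert={risk > ALERT_THRESHOLD}")
```

In this toy example no single dimension crosses the threshold, but the combined score does, which mirrors the P2P case described above.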

Platforms can't stop hackers from phishing users directly, but they can minimize exposure: multi-factor authentication, biometric passkeys, anti-phishing codes, and AI-based behavioral monitoring for rapid login attempts from unusual locations, repeated failed attempts, and large payments that deviate from typical user behavior. Additional machine learning-powered protection layers apply at the withdrawal stage to halt outbound transfers when behavior turns abnormal.
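
The behavioral checks listed above can be sketched as a handful of rules over recent account activity. The example below is illustrative only: the `LoginEvent` type, the `behavior_flags` function, the failure limit, and the z-score cut-off are assumptions, and a production system would likely use learned models rather than fixed thresholds.

```python
from dataclasses import dataclass
from statistics import mean, stdev

@dataclass
class LoginEvent:
    country: str      # where the attempt came from
    succeeded: bool   # whether authentication passed

def behavior_flags(known_countries: set[str],
                   recent_logins: list[LoginEvent],
                   past_payments: list[float],
                   new_payment: float,
                   max_failures: int = 3,
                   z_limit: float = 3.0) -> list[str]:
    """Return the names of the rules that the latest activity trips."""
    flags = []
    # Rule 1: a login attempt from a country the user has not used before.
    if any(e.country not in known_countries for e in recent_logins):
        flags.append("unusual_location")
    # Rule 2: too many failed attempts in the recent window.
    if sum(not e.succeeded for e in recent_logins) >= max_failures:
        flags.append("repeated_failures")
    # Rule 3: payment far above the user's typical amount (z-score test).
    if len(past_payments) >= 2:
        mu, sigma = mean(past_payments), stdev(past_payments)
        if sigma > 0 and (new_payment - mu) / sigma > z_limit:
            flags.append("payment_outlier")
    return flags

history = [100.0, 120.0, 90.0, 110.0]
suspicious = behavior_flags(
    {"SG"},
    [LoginEvent("SG", False)] * 3 + [LoginEvent("BR", True)],
    history,
    new_payment=5000.0,
)
normal = behavior_flags({"SG"}, [LoginEvent("SG", True)], history, new_payment=115.0)
print(suspicious, normal)
```

A withdrawal-stage control could then hold an outbound transfer whenever the returned list is non-empty, leaving the final decision to a second model or a human reviewer.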

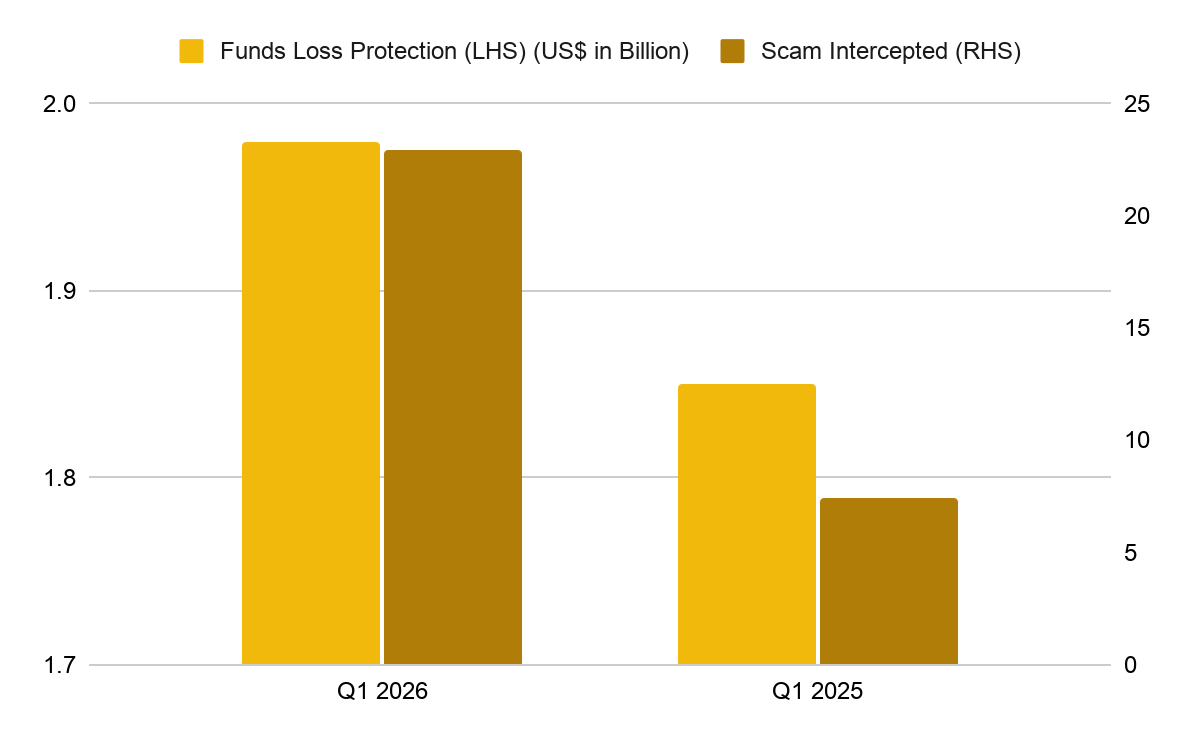

Exchanges can go a step further by proactively simulating phishing scenarios or identifying phishing and scam signals. Binance's simulation technique reduced the phishing rate from 3.2% to 0.4%, an 8x improvement. In fiscal year 2025 (ending November 2025), Binance's enhanced detection systems blocked US$6.69B in fraud and scam attempts across 5.4 million users, blacklisted 36,000 addresses, and issued over 9,600 real-time pop-up warnings daily. In Q1 2026, 22.9 million scam and phishing attempts were intercepted, up 54% quarter-on-quarter and 209% year-on-year, safeguarding US$1.98B in user funds. That is a 7% year-on-year increase in funds protected but a 30% quarter-on-quarter decline, which reflects seasonal dynamics: the vacation periods and elevated consumer spending of the preceding quarter create favorable conditions for scammers. Cumulatively, US$10.53B in user losses were prevented from 2025 through Q1 2026.

Figure 4: Binance protected a greater amount of user funds and intercepted more scam attempts in Q1 2026

Stablecoin Issuers as an Active Defense Layer

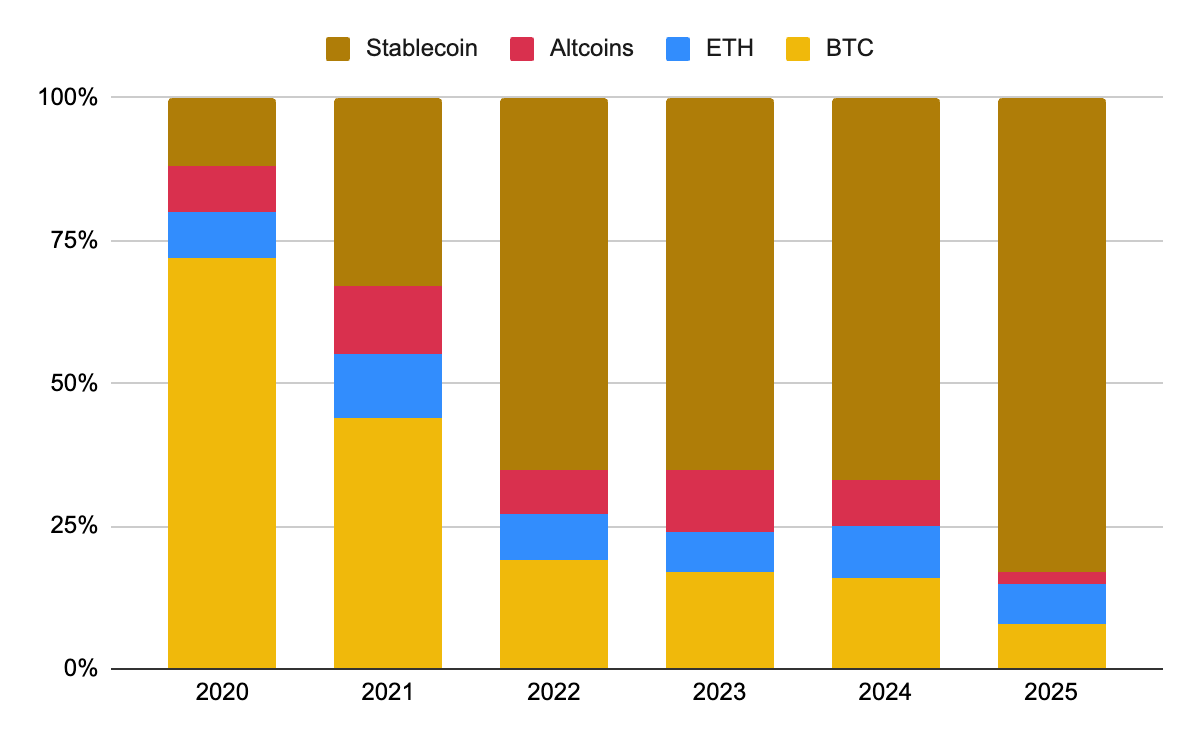

Beyond exchanges, stablecoin issuers themselves now play a direct enforcement role. Stablecoins have come to dominate illicit transactions — accounting for 84% of all illicit on-chain volume — but they also offer a unique advantage that traditional rails do not: issuers can freeze tainted funds at the protocol level.

Figure 5: Stablecoin use in illicit activities has risen over time

The two market leaders take notably different approaches. USDT has blacklisted nearly 18x more funds than USDC on ERC-20 alone, with the gap widening further when TRC-20 is included. Tether takes a proactive view, working directly with law enforcement and blockchain intelligence firms, and had frozen more than US$4.4B as of April 2026. Circle, by contrast, generally responds only to judicial orders and regulatory sanctions.

Public-private collaboration is amplifying this enforcement edge. The T3 Financial Crime Unit — a joint initiative between Tether, TRON, and TRM Labs launched in late 2024 — has frozen more than US$300M in criminal assets globally in its first year of operation, including US$19M tied to the Bybit hack alone. Binance was the first exchange to join its T3+ Global Collaborator Program, with early wins including US$6M frozen from a pig-butchering scam. This is the model emerging across the industry: AI-powered detection at the exchange layer, intelligence sharing across the network, and freeze-capable enforcement at the asset-issuer layer.

Convergence with TradFi: Card Fraud and KYC

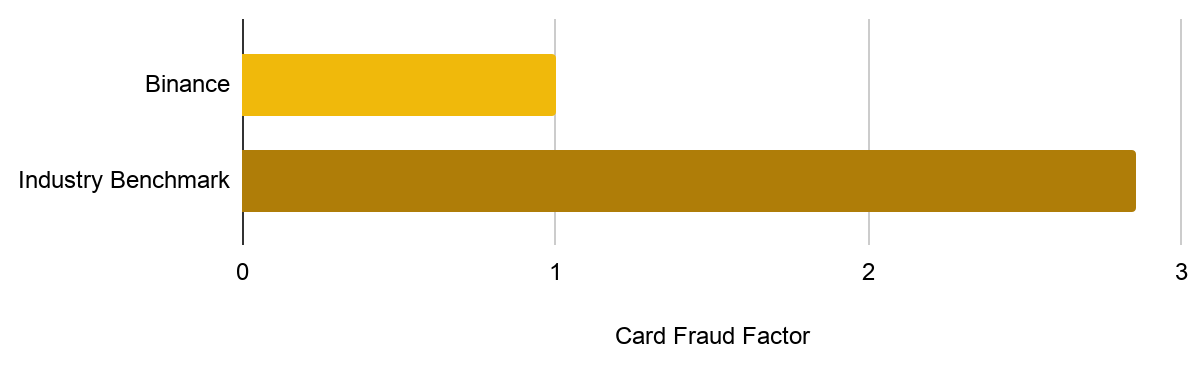

As TradFi and CeFi converge, exchanges are increasingly offering fiat card services — bringing them into direct contact with the same fraud patterns banks have spent decades fighting. AI's advantage in card fraud is speed: it reacts in milliseconds and learns from new fraud patterns continuously, complementing rules-based systems. The TradFi benchmark is instructive — Mastercard's gen-AI-enhanced Decision Intelligence platform reduced false positives in detecting compromised cards by up to 200% and accelerated identification of at-risk merchants by 300%.

Binance's in-house AI model adoption for fiat card risk decisions rose from 41% in Q4 2025 to 57% in Q1 2026. The result: Binance's card fraud rate is roughly 60–70% lower than that of industry competitors.

Figure 6: Binance’s card fraud rate is 1:2.86 relative to the estimated industry benchmark
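
The 1:2.86 ratio in the caption and the "60–70% lower" range in the text describe the same comparison. The short check below converts one into the other; the variable names are illustrative.

```python
# Ratio from the Figure 6 caption: Binance's rate is 1 for every 2.86 at the benchmark.
binance_vs_benchmark = 1 / 2.86

# Relative reduction compared with the estimated industry benchmark.
relative_reduction = 1 - binance_vs_benchmark
print(f"Binance's card fraud rate is about {relative_reduction:.0%} below the benchmark")
```

The implied reduction sits near the middle of the 60–70% range quoted above.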

KYC has emerged as the heaviest-hit domain. Around 80% of attacks against Binance involve some degree of KYC fraud, consistent with the broader industry pattern. Attack methods have evolved rapidly: from physical masks, to static photo spoofing, to deepfake video and synthetic face swaps. Binance's KYC Face Attack and Liveness Detection models continuously evolve to counter each new generation. Beyond defense, AI in KYC processing has delivered a 100x increase in operational throughput.

Empathy still matters. Believing that human conversation cuts through panic better than text, Binance has expanded human support for at-risk users — driving a 20% YoY increase in in-app voice calls, with over 36,000 calls to potential victims in 2025. Even after scams occurred, Binance recovered over US$12.8M across 48,000 cases in 2025, up nearly 41% year-on-year.

Prioritizing Responsible AI

As AI tools proliferate across platforms to boost productivity and convenience, they create new potential entry points for bad actors. Safety must be built into system architecture from the outset.

Binance Ai Pro illustrates this principle: an agentic tool that lets users conduct market analysis, execute transactions, and deploy automated strategies. Funds managed by the AI agent are segregated from the main account, the agent is granted only limited trading permissions (no withdrawals), and users retain flexibility to toggle access — a conservative user can grant spot-only access while forbidding leverage, futures, or borrowing. The agent operates through a defined skill hub. Behind the scenes, agent skills are screened before installation, because malicious skill packages are increasingly common: security researchers at Koi Security identified 341 malicious entries out of 2,857 skills available on OpenClaw's ClawHub marketplace — roughly 12% of the entire registry.
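
The permission model described above can be pictured as a default-deny policy in which withdrawals are blocked regardless of what the user toggles. This is a hedged sketch and not Binance Ai Pro's actual interface: `AgentPolicy`, `ALWAYS_DENIED`, and the action names are hypothetical. The last lines simply recompute the malicious-skill share from the Koi Security figures quoted in the text.

```python
from dataclasses import dataclass, field

# Actions the agent may never perform, whatever the user grants (hypothetical names).
ALWAYS_DENIED = frozenset({"withdraw"})

@dataclass
class AgentPolicy:
    # Default-deny: only explicitly granted actions are allowed; spot-only by default.
    allowed: set[str] = field(default_factory=lambda: {"spot"})

    def can(self, action: str) -> bool:
        """True only if the action is granted and not on the hard deny list."""
        return action not in ALWAYS_DENIED and action in self.allowed

conservative = AgentPolicy()  # spot trading only
permissive = AgentPolicy(allowed={"spot", "futures", "borrow", "withdraw"})

for action in ("spot", "futures", "withdraw"):
    print(action, conservative.can(action), permissive.can(action))

# Share of malicious entries in the skill registry, from the figures in the text.
malicious_share = 341 / 2857
print(f"Malicious skills: {malicious_share:.1%} of the registry")
```

Keeping the hard deny list separate from the user-controlled grants means a misconfigured or compromised toggle cannot widen the agent's reach to withdrawals.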

Looking Forward

AI-powered hacking parallels the rise of internet-based threats in the early days of the web. That era taught the industry hard lessons that apply directly here: defense must be layered, threat intelligence must be shared across institutions, and security must be built into systems by default rather than bolted on afterward. The scale of AI-enabled crime is unprecedented, but so is the response — global financial services are committing record AI investment, crypto exchanges are matching TradFi rigor, and public-private partnerships are demonstrating that even pseudonymous on-chain crime can be traced, frozen, and recovered.

The arms race will not end. But history has shown that determined coordination across industry, law enforcement, and technology providers can bring even rapidly evolving threats under control. The next twelve to twenty-four months will be defined by how quickly that coordination scales.

GENERAL DISCLOSURE: This material is prepared by Binance Research and is not intended to be relied upon as a forecast or investment advice, and is not a recommendation, offer or solicitation to buy or sell any securities, cryptocurrencies or to adopt any investment strategy. The use of terminology and the views expressed are intended to promote understanding and the responsible development of the sector and should not be interpreted as definitive legal views or those of Binance. The opinions expressed are as of the date shown above and are the opinions of the writer, they may change as subsequent conditions vary. The information and opinions contained in this material are derived from proprietary and non-proprietary sources deemed by Binance Research to be reliable, are not necessarily all-inclusive and are not guaranteed as to accuracy. As such, no warranty of accuracy or reliability is given and no responsibility arising in any other way for errors and omissions (including responsibility to any person by reason of negligence) is accepted by Binance. This material may contain ’forward looking’ information that is not purely historical in nature. Such information may include, among other things, projections and forecasts. There is no guarantee that any forecasts made will come to pass. Reliance upon information in this material is at the sole discretion of the reader. This material is intended for information purposes only and does not constitute investment advice or an offer or solicitation to purchase or sell in any securities, cryptocurrencies or any investment strategy nor shall any securities or cryptocurrency be offered or sold to any person in any jurisdiction in which an offer, solicitation, purchase or sale would be unlawful under the laws of such jurisdiction. Investment involves risks. For more information, see our Terms of Use and Risk Warning.