Original title: Turbine: Block Propagation on Solana

Original author: Ryan Chern

Original translation: Sharon, BlockBeats

About the author: Ryan Chern, the author of this article, was an engineering intern at Solana and a co-founder of Quantum Labs. He currently works at Blackhawk Research, a cryptocurrency research center.

Data availability is critical to blockchain, ensuring that nodes can easily access all necessary information for verification, thereby maintaining the integrity and security of the network. However, ensuring data availability while maintaining a high level of performance is a major challenge, especially as the network scales.

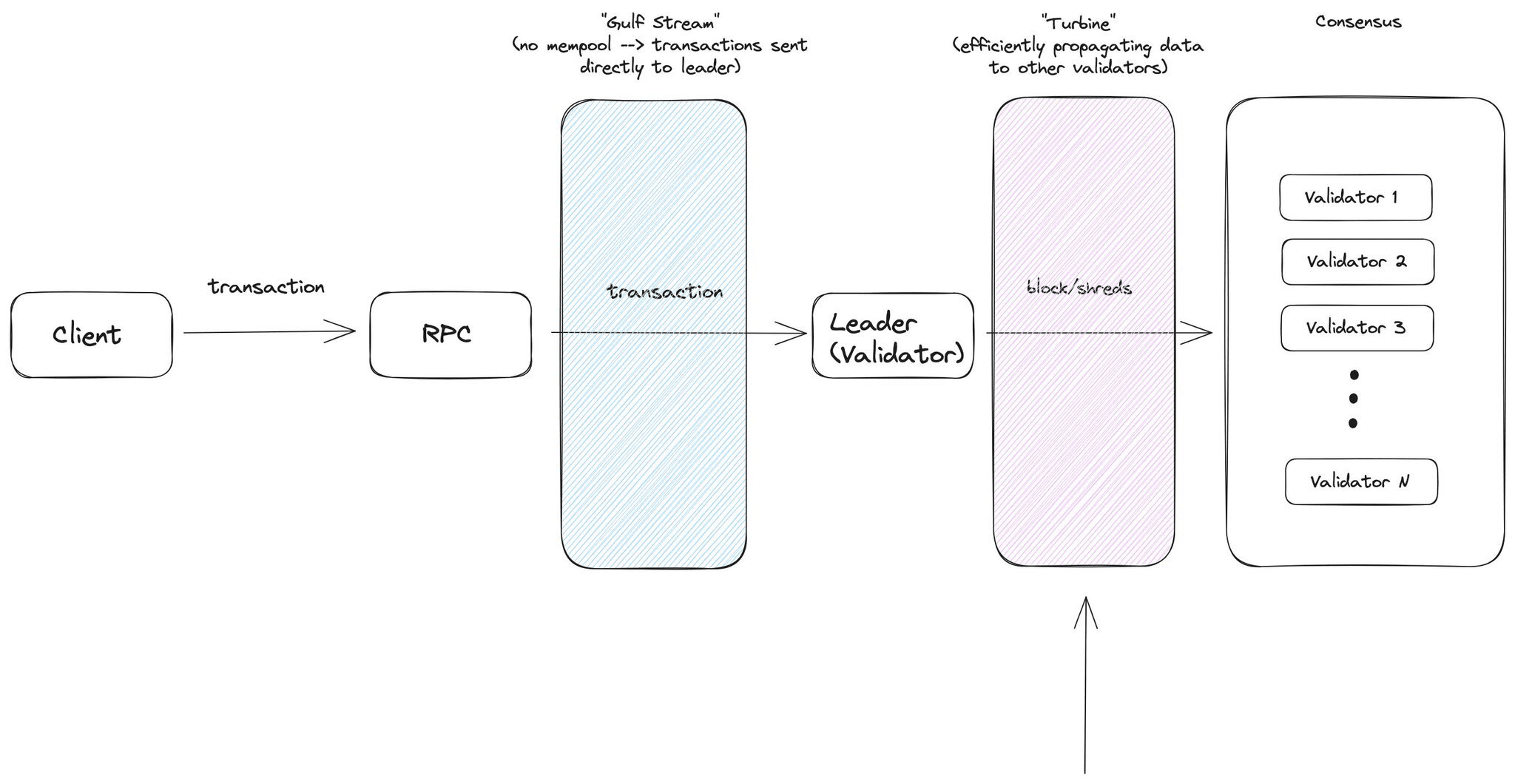

Solana meets this challenge through a unique architectural design that facilitates the continuous creation and propagation of blocks. This is achieved through several key innovations such as leader election, Gulf Stream (which eliminates the need for a mempool), and Turbine (the block propagation mechanism).

Continuous block production requires an efficient system to ensure that all validators receive the latest state in a timely manner. A simple approach is to have the leader transmit every block directly to every other validator. However, given Solana's high throughput, this approach would significantly increase bandwidth and other resource requirements while undermining decentralization.

Bandwidth is a scarce resource, and Turbine is Solana’s solution for optimizing the propagation of information from the leader of a given block to the rest of the network. Turbine is specifically designed to reduce egress (outbound data) pressure on the leader.

In this article, we’ll take a deep dive into how Turbine works and its critical role in the broader transaction inclusion process on Solana. We’ll also compare Turbine to other data availability solutions and discuss open research avenues in this area.

What is Turbine?

Turbine is the multi-layer block propagation mechanism used by the Solana cluster to broadcast ledger entries to all nodes. The core ideas behind Turbine have been discussed in academia for many years, as evidenced by this 2004 paper and more recent work.

Unlike traditional blockchains, which send data to all nodes sequentially or in a flooding fashion, Turbine takes a more structured approach to minimize communication overhead and reduce the load on individual nodes. At a high level, Turbine breaks a block into smaller chunks and propagates these chunks through a hierarchy of nodes. Here, a single node does not need to contact all other nodes, but only needs to communicate with a select few nodes.
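To make the difference concrete, the arithmetic can be sketched as follows. This is a rough illustration, not a measurement: the 1 MB block, the 2,000 validators, and the function names are hypothetical, and the sketch ignores erasure-coding overhead and packet headers.

```python
def direct_broadcast_egress(block_bytes: int, validators: int) -> int:
    """Bytes the leader must send if it ships the full block to every other validator."""
    return block_bytes * (validators - 1)


def tree_node_egress(block_bytes: int, children: int) -> int:
    """Upper bound on bytes a single node sends when it only forwards to a few children."""
    return block_bytes * children


block = 1_000_000   # hypothetical 1 MB block
validators = 2_000  # hypothetical cluster size

print(direct_broadcast_egress(block, validators))  # leader talks to everyone
print(tree_node_egress(block, 4))                  # a node that forwards to 4 children
```

In the direct case the leader's egress grows linearly with the number of validators, while in a tree the load on any one node is bounded by the number of nodes it forwards to.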

This becomes increasingly important as the network scales, as traditional propagation methods become unsustainable due to the sheer volume of necessary communication. Therefore, Turbine ensures that data is propagated quickly and efficiently across Solana. The speed of block propagation and validation is critical to maintaining Solana’s high throughput and network security.

Additionally, Turbine solves the data availability problem, ensuring that all nodes have access to the data they need to validate transactions efficiently. This is done without requiring large amounts of bandwidth, which is a common bottleneck in other blockchain networks.

Turbine greatly improves Solana’s ability to handle high transaction volumes while maintaining a lean and efficient network structure by alleviating bandwidth bottlenecks and ensuring fast block propagation. This innovative protocol is one of the cornerstones of Solana’s promise of a fast, secure, and scalable network.

Now, let’s take a deeper look at the mechanics of Turbine and how it propagates blocks on the Solana network.

How does Turbine propagate blocks?

Before a block is propagated (i.e. transmitted to other validators in the network), the leader builds and orders the block based on the incoming transaction stream. Once the block is built, it can be sent to the rest of the network via Turbine. This process is called block propagation.

Validators then exchange vote messages, which are themselves encapsulated in block data, until the block reaches a commitment status of "confirmed" or "finalized". A confirmed block is a block that has received a supermajority of ledger votes, while a finalized block is a confirmed block that has 31 or more confirmed blocks built on top of it. The differences in commitment states are explained in detail here. This part of consensus will be explored in a later article.

Visualization of where Turbine is in the Solana transaction lifecycle

When a leader builds and proposes a block, the actual data is sent to other validators in the network as shreds (partial blocks). A shred is the atomic unit sent between validators.

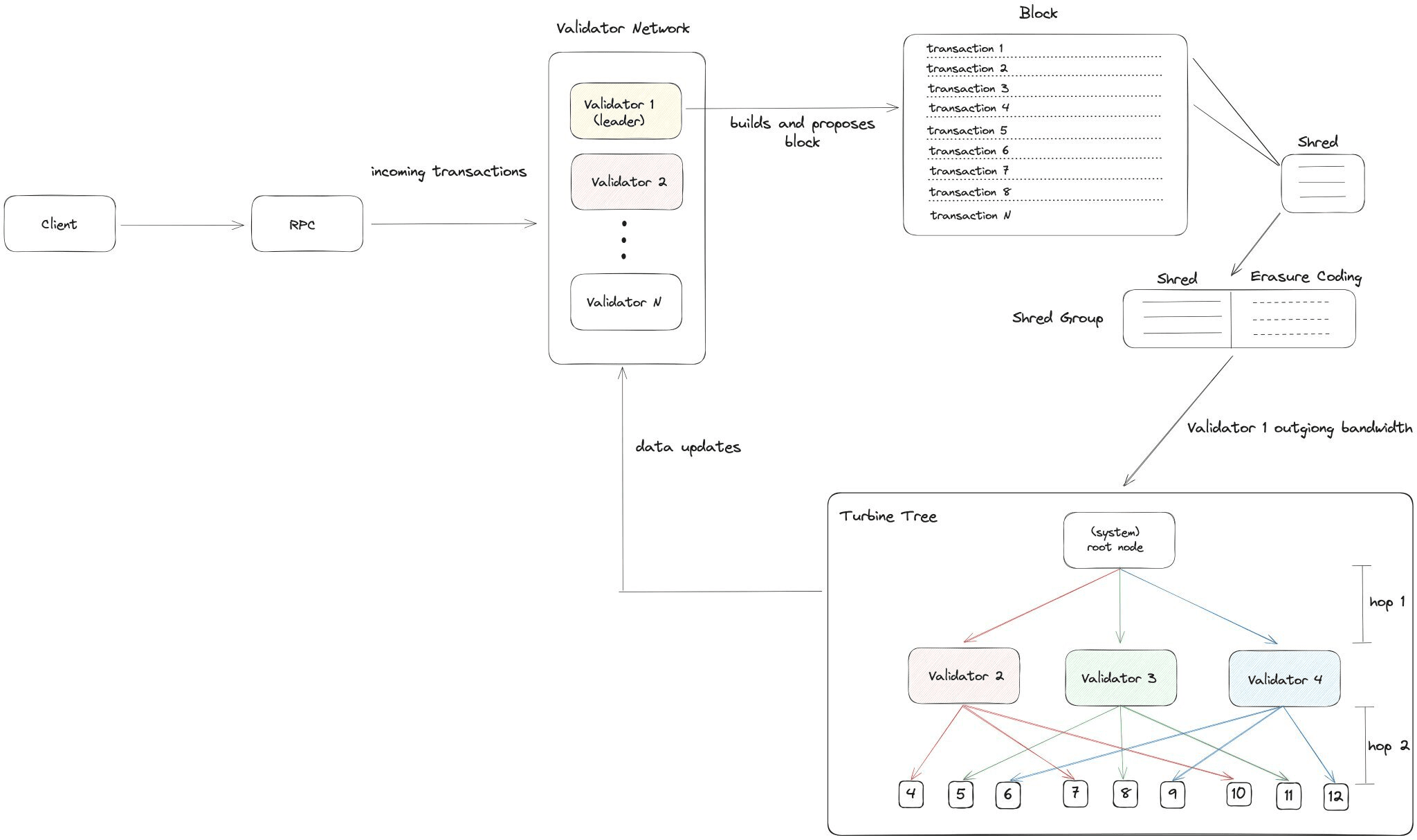

At a high level, Turbine takes shreds and sends them to a predetermined set of validators, which then forward the shreds to a new set of validators. The following diagram summarizes the sequential process of shred propagation:

As shown in the figure above, although it is called "block propagation", the data is propagated at the shred level.

In this example, Validator 1 is the designated slot leader. During its slots (a validator is designated as the leader for 4 consecutive slots), Validator 1 builds and proposes a block. Validator 1 first breaks the block into sub-blocks called shreds through a process called shredding.

Shredding splits block data into data shreds sized to fit the maximum transmission unit (MTU), which is the largest amount of data that can be sent from one node to the next without being broken into smaller units. It also generates corresponding recovery shreds using a Reed-Solomon erasure coding scheme. This scheme makes it possible to recover lost data and helps preserve the integrity of data during transmission, which is critical to maintaining the security and reliability of the network.
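The splitting half of shredding can be sketched in a few lines. This is a minimal illustration under stated assumptions: the `DataShred` type, the `shred_block` and `reassemble` helpers, and the 1,000-byte payload size are hypothetical and do not reflect the actual Solana shred layout, which also carries headers and signatures.

```python
from dataclasses import dataclass

# Hypothetical payload size, chosen only so that a shred fits under a typical MTU.
SHRED_PAYLOAD_BYTES = 1_000


@dataclass(frozen=True)
class DataShred:
    slot: int       # slot the block belongs to
    index: int      # position of this shred within the block
    payload: bytes  # slice of the serialized block


def shred_block(slot: int, block: bytes, payload_size: int = SHRED_PAYLOAD_BYTES) -> list[DataShred]:
    """Split serialized block data into fixed-size data shreds (the last one may be shorter)."""
    return [
        DataShred(slot, index, block[offset:offset + payload_size])
        for index, offset in enumerate(range(0, len(block), payload_size))
    ]


def reassemble(shreds: list[DataShred]) -> bytes:
    """Rebuild the block from data shreds, which may arrive in any order."""
    return b"".join(s.payload for s in sorted(shreds, key=lambda s: s.index))


block = bytes(range(256)) * 10           # 2,560 bytes of dummy block data
shreds = shred_block(slot=7, block=block)
print(len(shreds))
```

Because each shred carries its index, a receiver can reassemble the block regardless of arrival order, which matters when shreds travel over different paths.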

This breakdown and propagation process ensures that block data is distributed quickly and efficiently across Solana, maintaining high throughput and network security.

Erasure Coding

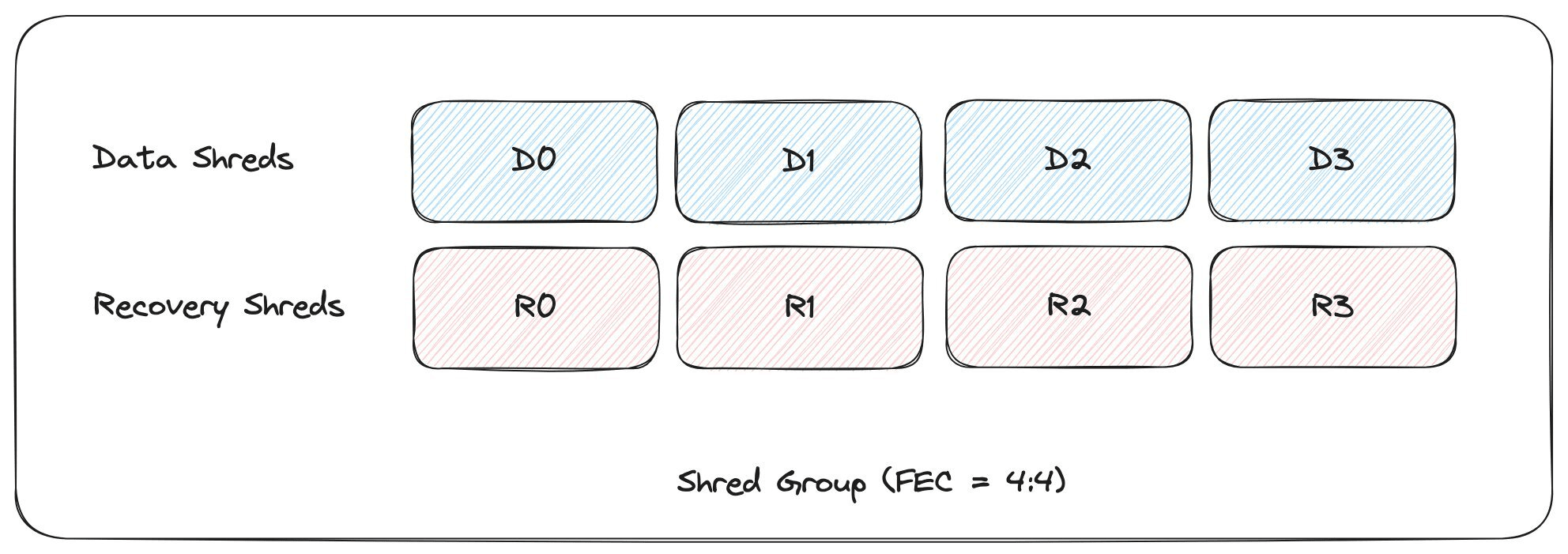

Before the shreds are propagated through the Turbine tree, they are first encoded using Reed-Solomon erasure coding (a polynomial-based error detection and correction scheme). Erasure coding is a data protection method that allows the original data to be recovered even if some parts are lost or damaged during transmission. Reed-Solomon erasure coding is a specific type of forward error correction (FEC) algorithm.

Turbine fundamentally relies on downstream validators retransmitting packets. Those validators may be malicious (Byzantine nodes that choose to rebroadcast incorrect data) or may receive incomplete data (network packet loss). Any network-wide packet loss is compounded by Turbine’s retransmission tree structure, because the probability of a packet failing to reach its destination increases with each hop.

At a high level, if the leader transmits 33% of a block's packets as erasure codes, then the network can drop any 33% of the packets without losing the block. The leader is able to dynamically adjust this number (the FEC rate) based on network conditions, taking into account variables such as recently observed network-wide packet loss and tree depth.

For simplicity, let’s examine a shred group with an FEC rate of 4:4.

Example of a shred group: any 4 of the 8 packets can be corrupted or lost without affecting the original data

Data shreds are partial blocks of the original block built by the leader, while recovery shreds are the erasure-coded blocks generated by Reed-Solomon.
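The 4:4 example can be reproduced with a toy Reed-Solomon-style code. This is a minimal sketch, assuming a prime field of size 257 for readability; production Reed-Solomon codes typically operate over GF(2^8), and the function names here are hypothetical. Each "shred" is reduced to a single symbol, whereas a real shred would apply the same idea across every byte position of its payload.

```python
P = 257  # toy prime modulus (assumption); real codecs typically use GF(2^8)


def interpolate_at(points: list[tuple[int, int]], x: int) -> int:
    """Evaluate at x the unique polynomial through `points` (Lagrange interpolation mod P)."""
    total = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if i != j:
                num = num * (x - xj) % P
                den = den * (xi - xj) % P
        total = (total + yi * num * pow(den, -1, P)) % P
    return total


def encode(data: list[int], n_recovery: int) -> list[int]:
    """Treat data symbols as polynomial values at x = 0..k-1 and append values at further x."""
    k = len(data)
    points = list(enumerate(data))
    return data + [interpolate_at(points, x) for x in range(k, k + n_recovery)]


def recover(received: dict[int, int], k: int) -> list[int]:
    """Rebuild the k data symbols from any k surviving (position, symbol) pairs."""
    if len(received) < k:
        raise ValueError("not enough shreds to reconstruct the group")
    points = list(received.items())[:k]
    return [interpolate_at(points, x) for x in range(k)]


data = [10, 20, 30, 40]            # 4 data symbols
coded = encode(data, n_recovery=4)  # 4 data + 4 recovery symbols
survivors = {i: coded[i] for i in (1, 4, 6, 7)}  # any 4 of the 8 arrive
print(recover(survivors, k=4) == data)
```

The key property is that a polynomial of degree less than 4 is fully determined by any 4 of its points, so it does not matter which 4 of the 8 symbols survive.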

Blocks on Solana typically use 32:32 FEC (any 32 of the 64 packets can be lost without requiring retransmission). As stated in the Solana documentation, the following are conservative network assumptions:

Packet loss rate 15%

50k TPS generates 6400 shreds per second

A 32:32 FEC rate produces a block success rate of approximately 99%. Additionally, leaders have the power to increase the FEC rate if they choose to increase the probability of a block success.
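The effect of the FEC rate on success probability can be estimated with a binomial model. This is a back-of-the-envelope sketch, not the calculation from the Solana documentation: it assumes each packet is lost independently with the stated probability, that a group fails only when more than its recovery count is lost, and that one second of traffic contains 6400 / 32 = 200 groups. The function names are hypothetical.

```python
from math import comb


def group_failure_probability(data: int, recovery: int, loss: float) -> float:
    """Probability that more than `recovery` of the data + recovery packets are lost."""
    n = data + recovery
    return sum(
        comb(n, lost) * loss**lost * (1 - loss) ** (n - lost)
        for lost in range(recovery + 1, n + 1)
    )


def success_probability(groups: int, data: int, recovery: int, loss: float) -> float:
    """Probability that every group can be reconstructed without retransmission."""
    return (1 - group_failure_probability(data, recovery, loss)) ** groups


groups = 6400 // 32  # assumption: 6400 data shreds per second, 32 data shreds per group
print(success_probability(groups, data=32, recovery=32, loss=0.15))
print(success_probability(groups, data=32, recovery=8, loss=0.15))
```

Comparing the two printed values shows why adding recovery shreds helps: with fewer recovery shreds per group, the chance that at least one group cannot be rebuilt rises sharply.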

Turbine currently uses UDP for block propagation, which provides a huge latency advantage. According to validator operators, transferring 6MB+ erasure coded data from us-east-1 to eu-north-1 takes 100ms using UDP, while TCP takes 900ms.

Turbine Tree

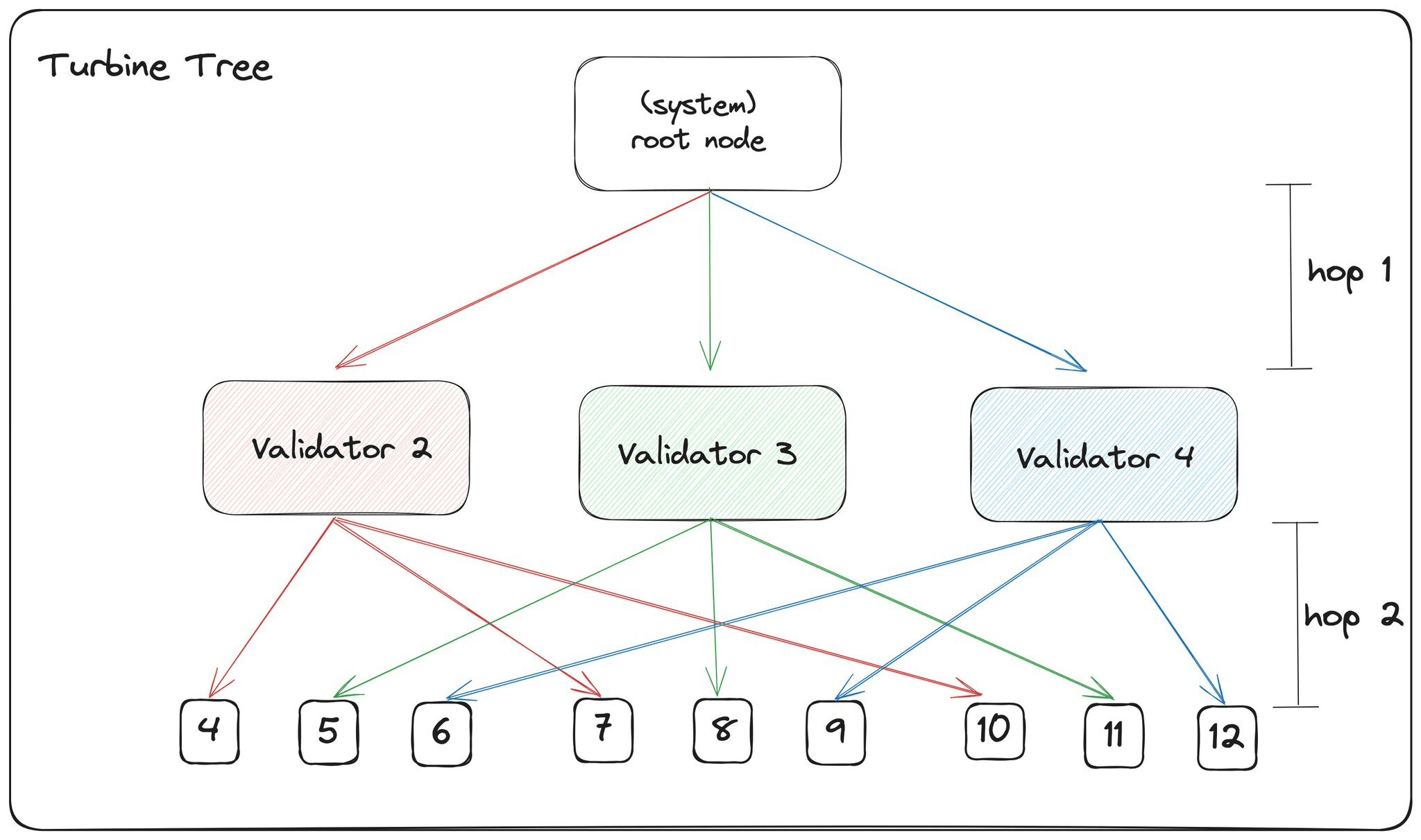

The Turbine tree is a structured network topology used by Solana to facilitate the efficient propagation of shreds (encoded block data) between validators. Once the shreds are correctly encoded into their respective shred groups, they can be propagated through the Turbine tree to inform other validators in the network of the latest state.

Each shred group is sent via a network packet to a special root node that manages which validators are part of the first layer (1 hop away). The following steps are then performed:

1. List creation: The root node aggregates all active validators into a list and then sorts them according to each validator's stake in the network. Validators with higher stake weights are prioritized so that they receive shreds sooner, allowing them to respond with their vote messages faster and help the network reach consensus.

2. List shuffling: This list is then shuffled in a deterministic manner. This generates a "Turbine tree" from the set of validator nodes for each shred, using a seed derived from the slot leader ID, slot, shred index, and shred type. A new tree is generated for each shred group at runtime to mitigate potential security risks associated with static tree structures.

3. Layer formation: The nodes are then divided into layers starting from the top of the list. The division is based on the DATA_PLANE_FANOUT value, which determines the width and depth of the Turbine tree. This value affects how fast shreds propagate through the network. Currently DATA_PLANE_FANOUT is 200.

Because the Turbine tree is deterministic and known to every node, each validator knows exactly where it is responsible for relaying a given shred. With the current DATA_PLANE_FANOUT value of 200, the Turbine tree is typically a 2 or 3 hop tree (depending on the number of active validators).
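The three steps above can be sketched as follows. This is an illustrative approximation, not the validator's actual algorithm: the SHA-256 seed derivation, the weighted-shuffle method (random keys raised to the power 1/stake), and the function names are all assumptions, and the validator names and fanout of 3 are chosen only to keep the output small.

```python
import hashlib
import random


def shuffle_seed(leader_id: str, slot: int, shred_index: int, shred_type: str) -> int:
    """Derive a per-shred seed from the fields named in the text (hashing scheme is an assumption)."""
    material = f"{leader_id}|{slot}|{shred_index}|{shred_type}".encode()
    return int.from_bytes(hashlib.sha256(material).digest(), "big")


def weighted_shuffle(stakes: dict[str, int], seed: int) -> list[str]:
    """Deterministic shuffle in which higher-stake validators tend to land earlier."""
    rng = random.Random(seed)
    keyed = []
    for node in sorted(stakes):        # fixed order so every node derives the same list
        u = 1.0 - rng.random()         # uniform in (0, 1]
        keyed.append((u ** (1.0 / stakes[node]), node))
    return [node for _, node in sorted(keyed, reverse=True)]


def build_layers(order: list[str], fanout: int) -> list[list[str]]:
    """Cut the shuffled list into layers of width 1 (root), fanout, fanout**2, ..."""
    layers, start, width = [], 0, 1
    while start < len(order):
        layers.append(order[start:start + width])
        start += width
        width *= fanout
    return layers


stakes = {f"validator-{i}": i + 1 for i in range(50)}  # hypothetical stake table
seed = shuffle_seed("leader-A", slot=10, shred_index=0, shred_type="data")
order = weighted_shuffle(stakes, seed)
layers = build_layers(order, fanout=3)
print([len(layer) for layer in layers])
```

Since every validator can compute the same seed from public values, each one can rebuild the same tree locally and find its own position without any coordination. The number of layers grows only logarithmically with the validator count for a given fanout.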

Additionally, nodes are able to fall back to gossip and repair if they do not receive enough shreds or if the loss rate exceeds the FEC rate. Under the current implementation, nodes that lack enough shreds to rebuild a block send a retransmission request to the leader. Under Deterministic Turbine, any node that receives a complete block can send the repair shreds required by the requesting node, pushing data transfer further down the tree to the area requesting data.

Comparing Block Propagation Between Solana and Ethereum

Block propagation on Solana differs from block propagation on Ethereum. Here are some high-level differences:

1. Solana’s ideal bandwidth requirements (>1 Gbps) are significantly higher than Ethereum (geth recommends >25 Mbps). This higher bandwidth requirement is due to Solana’s larger block size and faster block times. Solana is designed to efficiently utilize the entire bandwidth to speed up data transfer, thereby reducing latency. While bandwidth peaks up to 1 Gbps, 1 Gbps is not used consistently. Solana’s architecture specifically caters to peaks in bandwidth demand.

2. Solana uses Turbine for block data propagation, while Ethereum uses a standard gossip protocol. On Ethereum, block data propagation is done in a simple way: each node communicates with every other full node in the network. Once a new block is available, a client sends it to its peers, which verify the block and the transactions in it. This mechanism is suitable for Ethereum because it has a smaller block size and longer block time compared to Solana. Ethereum L2 rollup data (excluding validiums) also propagates via the gossip protocol, and the block data is stored in the "calldata" field of Ethereum L1 blocks.

3. Ethereum uses TCP (via the DevP2P protocol) for block propagation, while Solana uses UDP (with some community support for moving to QUIC). There are some trade-offs to consider between UDP and QUIC:

UDP's unidirectional nature results in lower latency compared to QUIC, which requires the use of QUIC streams. Discussions on implementing unidirectional streams in QUIC are ongoing;

QUIC advocates argue that while custom flow control can be built on top of UDP, doing so requires a significant amount of engineering work, and QUIC reduces this workload by natively supporting such features. The end goal is the same, but the upper limit of QUIC performance (latency, throughput, etc.) is the current state of pure UDP.

These differences highlight the unique architectural decisions made by Solana and Ethereum, which contribute to their respective performance, scalability, and network robustness. For a more in-depth analysis of TCP, UDP, and QUIC, check out our articles on Solana and QUIC.

Future Research Questions

Block propagation and data availability remain open areas of research, with many teams developing their own unique approaches. While metrics may continue to evolve, we want to provide an overview of different approaches and their associated tradeoffs:

1. Some discussion has surfaced about Turbine as a "data availability" (DA) mechanism. Turbine does act as one, in that the entire block data is published and downloaded by all other validators on Solana. Despite this, Turbine lacks support for Data Availability Sampling (DAS), a feature that can help light nodes verify state with reduced hardware requirements. This is an active development focus for teams such as Celestia. Like Turbine, DAS uses erasure coding, but does so with the explicit purpose of detecting and preventing data withholding attacks.

2. For Solana Virtual Machine (SVM) L2s (such as Eclipse), Turbine loses relevance because there is no validator set to pass data between. In the case of Eclipse, block data is published to Celestia for data availability, which enables external observers to run fraud proofs to ensure correct execution and state transitions. Eclipse will be one of the first implementations of SVM outside of the Solana network itself. Pyth has also forked SVM for its own oracle network "Pythnet" and effectively runs it as its own sidechain.

3. On Solana, full nodes manage block propagation while also participating in other parts of the integrated blockchain stack, such as transaction ordering and consensus. What would Turbine's quantitative metrics look like if it ran on dedicated hardware as a modular component?

4. Turbine prioritizes nodes with higher stake weights to receive block data first. Will this lead to more centralization of MEV over time?

5. How do different data availability approaches such as EigenDA (horizontally scalable single unicast relay) and Celestia (data availability sampling) compare to Turbine in production in terms of raw throughput and trust minimization?

6. Firedancer is designed to further increase data propagation and is optimized for robust 10 Gbps bandwidth connections. How will their system-level optimizations around Turbine perform in production on both consumer and professional hardware?

7. Currently, all nodes on Solana are full nodes (light client implementations are still in development). Sreeram Kannan (EigenLayer) recently described implementing DAS-S on top of Turbine. Will a version of DAS for Turbine be supported? Can DAS be implemented in a way that maintains high data throughput while light clients (with much lower resource requirements) still achieve trust minimization?

Conclusion

Congratulations! In this article, we looked at Turbine and how it works within the broader area of transaction inclusion on Solana. We compared Turbine to other data availability solutions and discussed different research avenues open to this area. Solana’s Turbine protocol demonstrates the network’s commitment to achieving high throughput and low latency by leveraging a structured network topology to efficiently propagate block data between validators.

Finding ways to enhance data availability and make block propagation more efficient can drive innovation within the broader blockchain community. A comparative analysis of Solana and Ethereum’s block propagation mechanisms reveals their respective strengths and tradeoffs, and inspires deeper discussion on how emerging blockchain solutions such as EigenDA, Celestia, and Firedancer may shape this ecosystem in the future.

Solutions for efficient data dissemination and data availability are far from complete. However, Solana’s approach and its strong commitment to optimizing network performance without compromising security and trust minimization are encouraging.
