Recently, while exploring the possibilities offered by artificial intelligence tools to analyze data from my final-year research, I was struck by how these technologies, although powerful, often incorporate a degree of uncertainty into their responses. This reminded me of my early experiences with Web3, where decentralization promised increased transparency, but where daily interactions already revealed limitations related to trust and information verification. These observations lead me to reflect on the convergence between AI and decentralized protocols, a rapidly evolving field that deserves nuanced attention.

At the heart of this discussion lies a systemic problem affecting the widespread adoption of AI: the reliability of the generated outputs. Current models, despite their advancements, are subject to hallucinations and to biases inherent in the training data, posing risks in sensitive contexts such as professional decision-making or academic research. This is not merely a technical issue, but a structural flaw that erodes user trust and hinders the integration of AI into larger systems where accuracy is paramount. It is within this context that the Mira Network project emerges as a logical response, seeking to address these challenges through a decentralized approach. By combining blockchain principles with AI verification, it proposes a framework where reliability no longer depends on a central entity, but on distributed consensus. This appears to be a natural extension of the ideas underlying Web3, where collective verification could mitigate the inherent weaknesses of traditional AI algorithms.

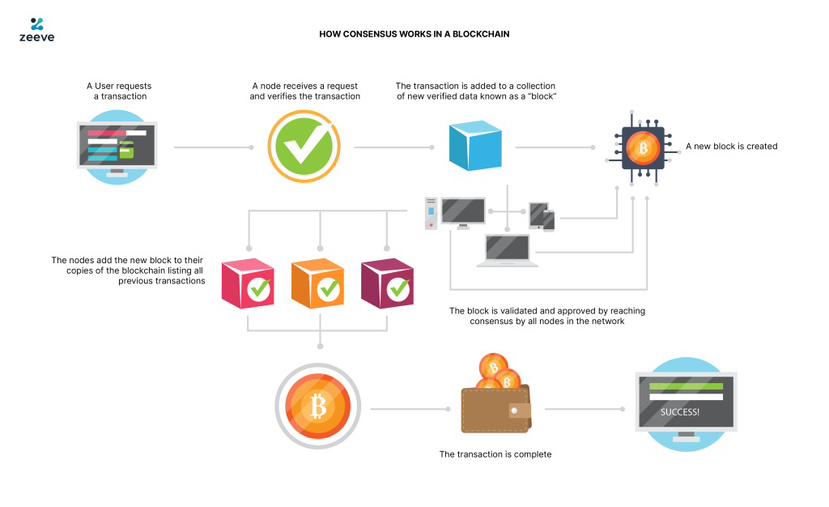

Technically, the system relies on a protocol that uses a blockchain to validate AI outputs through consensus. Node operators stake $MIRA tokens to participate in this process. The token lives on the Base chain, with a total supply of one billion and an initial circulation of approximately 19.12% (about 191.2 million tokens). In exchange for their honest contribution to verification, operators receive rewards, while penalties are applied for malicious behavior. The Verified Generate API and the Mira Flows marketplace facilitate this integration, aiming for over 95% accuracy by aggregating multiple validations, which makes the system more robust without requiring excessive centralization.
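To make the stake, reward, and penalty loop more concrete, here is a minimal sketch of one verification round. It is not Mira's actual protocol or API: the `Node` class, the `settle_round` function, the 60% quorum, and the reward and slash rates are all hypothetical values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Node:
    """A hypothetical verifier node; field names are illustrative only."""
    name: str
    stake: float  # amount of tokens staked


def settle_round(nodes, votes, reward_rate=0.01, slash_rate=0.10, quorum=0.6):
    """Run one stake-weighted verification round.

    votes maps node name -> True (output judged valid) or False.
    Returns the consensus verdict, or None if neither side reaches quorum.
    All rates and the quorum are made-up values, not protocol parameters.
    """
    total = sum(n.stake for n in nodes)
    yes = sum(n.stake for n in nodes if votes[n.name])
    if yes / total >= quorum:
        verdict = True
    elif (total - yes) / total >= quorum:
        verdict = False
    else:
        return None  # no consensus: stakes are left untouched

    for n in nodes:
        if votes[n.name] == verdict:
            n.stake *= 1 + reward_rate  # reward agreement with consensus
        else:
            n.stake *= 1 - slash_rate   # penalize disagreement
    return verdict


nodes = [Node("a", 100.0), Node("b", 100.0), Node("c", 100.0)]
verdict = settle_round(nodes, {"a": True, "b": True, "c": False})
print(verdict, [round(n.stake, 2) for n in nodes])  # True [101.0, 101.0, 90.0]
```

In this toy version, a node that votes against the consensus loses part of its stake even if it was acting in good faith, which is one reason real designs need more careful penalty rules than a single flat rate.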

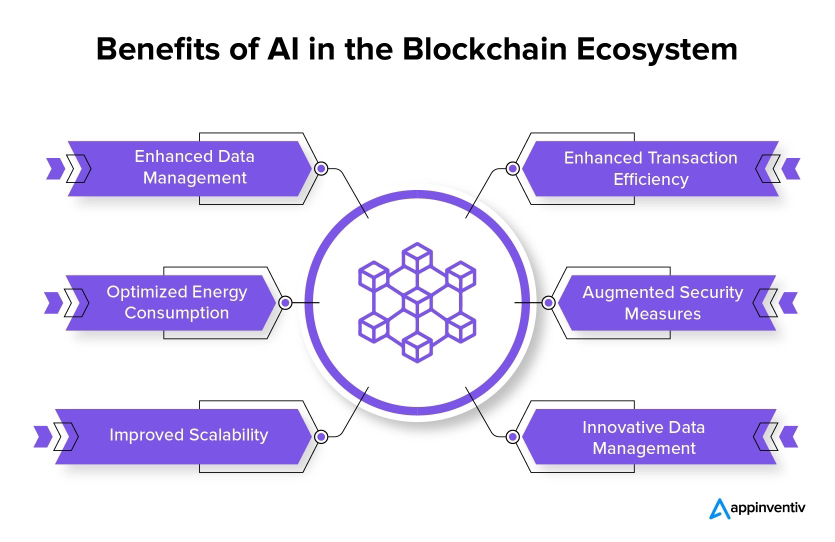
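The idea that aggregating multiple validations can push accuracy above 95% can be illustrated with a simple majority-vote calculation. This is only a sketch under strong assumptions: the verifiers are taken to be independent, and the 85% individual accuracy is a hypothetical figure, not one published by the project.

```python
from math import comb


def majority_accuracy(p, n):
    """Probability that a strict majority of n independent verifiers,
    each correct with probability p, reaches the right verdict (n odd)."""
    k_min = n // 2 + 1  # smallest number of correct votes that forms a majority
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range(k_min, n + 1)
    )


# Hypothetical per-verifier accuracy of 85%, for growing committee sizes.
for n in (1, 3, 5, 7):
    print(n, round(majority_accuracy(0.85, n), 4))
```

Under these assumptions, three verifiers reach roughly 94% and five reach roughly 97%. In practice, models trained on similar data tend to make correlated errors, so the real gain is likely smaller than this idealized calculation suggests.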

In the longer term, such a model could influence how we design digital infrastructure, fostering more accountable and scalable AI. Imagine applications where automated decisions are not only fast but also transparently audited, potentially reducing errors in sectors like finance and healthcare. This could encourage wider adoption by making AI less opaque and more aligned with societal needs, while also stimulating innovation around decentralized marketplaces for verified AI streams.

However, some realistic challenges should be noted. Reliance on node participation could raise scalability issues if the network grows too quickly, and penalty mechanisms, while effective deterrents, are not foolproof against coordinated attacks. Furthermore, integration with existing blockchains like Base involves considerations of energy consumption and token volatility, which could limit accessibility for some users. These limitations serve as a reminder that all technological innovation takes place within an imperfect ecosystem, requiring continuous adjustments.

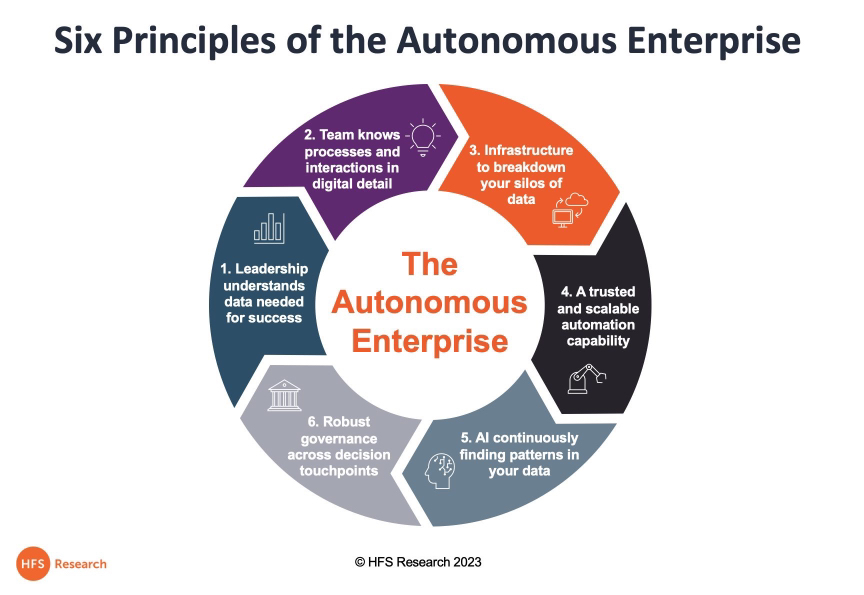

Ultimately, this exploration leads me to a broader reflection on the strategic challenges of trust, scalability, and autonomy in emerging technologies. Protocols like Mira could help build an infrastructural layer where AI and blockchain reinforce each other, fostering greater accountability without sacrificing innovation. This invites us to think not in terms of utopia, but of measured progress toward more resilient systems, where decentralized verification becomes a crucial pillar for navigating the complexities of the digital world.