The growing reliability problem in artificial intelligence

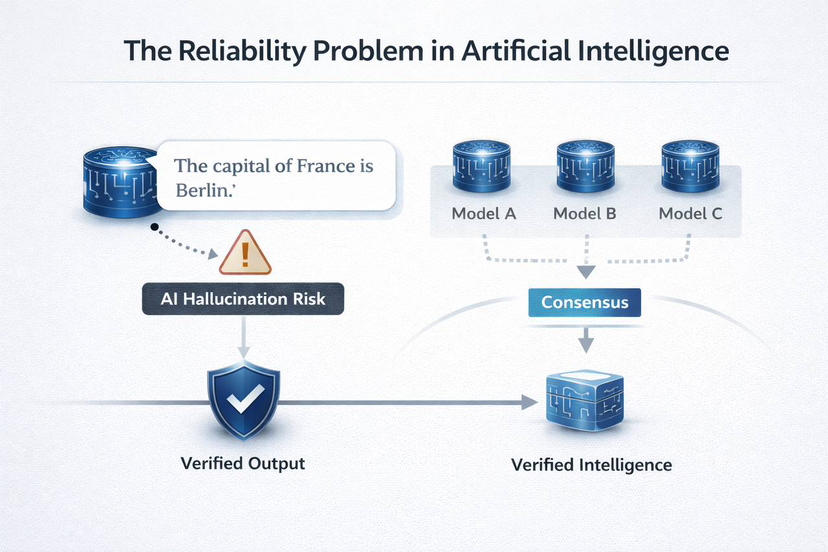

Over the last few years I have noticed something interesting about artificial intelligence. The technology is becoming incredibly powerful, but at the same time there is a quiet problem that most people do not talk about enough. AI can sound very confident even when it is wrong. Anyone who has used modern AI tools has probably experienced this. You ask a question and the system gives a clear answer, sometimes even with explanations and references. But later you realize that some part of the answer was incorrect.

This happens because most AI systems work by predicting the most likely next word rather than proving facts. They are extremely good at producing language that looks correct, but that does not mean the information is always accurate. This issue is often called “AI hallucination.” Even advanced models still produce wrong or biased information sometimes, which makes them difficult to trust in important areas like finance, education, healthcare or law.

Why verification might matter more than intelligence

The idea behind Mira becomes easier to understand once you consider how humans confirm information. In science, a claim made by a single person is rarely accepted on its own. Other researchers test it by repeating the experiment and checking the evidence. Only after a claim has been confirmed again and again does it become accepted knowledge.

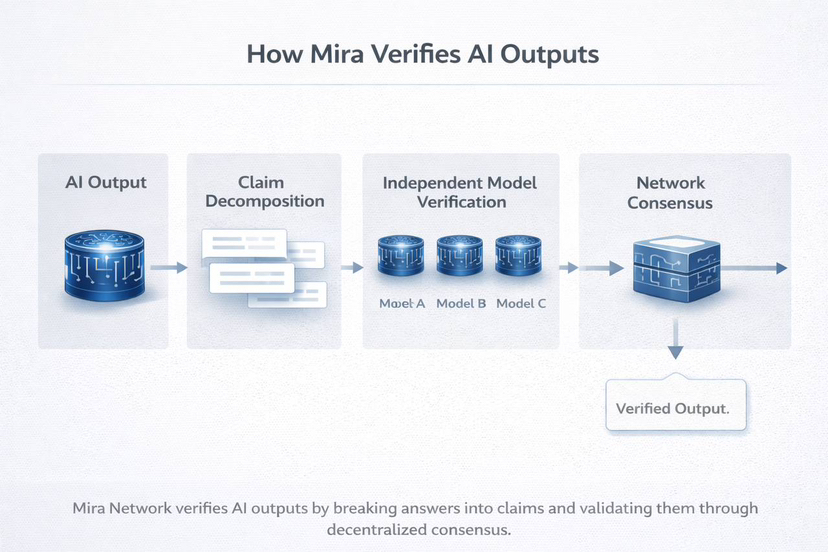

Mira attempts to bring a similar principle to artificial intelligence. In the network, the output of a single model is not automatically trusted. Instead, an AI answer is broken down into smaller claims that can be verified individually. These claims are then sent to a number of independent models, or verification nodes, which examine each one separately.

What I find interesting about this design is that it treats truth as something that emerges from consensus among many independent perspectives, rather than something decided by a central authority.
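To make the idea more concrete, here is a minimal sketch of claim-level consensus. It is not Mira's actual implementation: splitting an answer on periods, the two-thirds acceptance threshold, and the names `split_into_claims`, `verify_claim` and `verify_answer` are all hypothetical, and the "verifiers" are plain functions standing in for independent models run by separate nodes.

```python
from collections import Counter
from typing import Callable, Dict, List

# A verifier takes one claim and returns True (supported) or False (rejected).
# Hypothetical stand-in for an independent model run by a separate node.
Verifier = Callable[[str], bool]


def split_into_claims(answer: str) -> List[str]:
    """Naive claim extraction (hypothetical): treat each sentence as one claim."""
    return [s.strip() for s in answer.split(".") if s.strip()]


def verify_claim(claim: str, verifiers: List[Verifier], threshold: float = 2 / 3) -> bool:
    """Accept a claim only if at least `threshold` of the verifiers support it."""
    votes = Counter(v(claim) for v in verifiers)
    return votes[True] / len(verifiers) >= threshold


def verify_answer(answer: str, verifiers: List[Verifier]) -> Dict[str, bool]:
    """Return a per-claim verdict for an AI-generated answer."""
    return {claim: verify_claim(claim, verifiers) for claim in split_into_claims(answer)}


# Toy verifiers: two check against a small set of known facts, one approves everything.
KNOWN_FACTS = {"Paris is the capital of France"}


def honest(claim: str) -> bool:
    return claim in KNOWN_FACTS


def careless(claim: str) -> bool:
    return True


result = verify_answer(
    "Paris is the capital of France. The Moon is made of cheese.",
    [honest, honest, careless],
)
print(result)  # {'Paris is the capital of France': True, 'The Moon is made of cheese': False}
```

The careless verifier approves the false claim, but it is outvoted, so only the supported claim passes. Checking claims one at a time also means a single wrong sentence does not have to discard the whole answer.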

Why decentralization matters

Another aspect that makes Mira stand out, in my opinion, is how its verification is organized. Instead of relying on one company or a centralized server to carry out verification, the network relies on a large number of independent participants. These participants run different AI models and use them to check the claims submitted to the network.

This decentralized structure matters because it reduces the risk of favoritism or manipulation. When verification is controlled by a single organization, that organization's own biases can shape which answers are considered acceptable. With a large number of independent validators, no single party decides the outcome, so the result tends to be more balanced.
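A quick back-of-the-envelope calculation shows why more validators can help. The sketch below assumes each validator is wrong with the same probability `p`, fully independently of the others, and that the network accepts the majority verdict. These are simplifying assumptions, not a description of how Mira actually aggregates votes, and `majority_error` is a hypothetical helper.

```python
from math import comb


def majority_error(n: int, p: float) -> float:
    """Probability that a strict majority of n validators is wrong, assuming each
    is wrong independently with probability p. n must be odd so there are no ties."""
    if n % 2 == 0:
        raise ValueError("use an odd number of validators to avoid ties")
    k_min = n // 2 + 1  # smallest number of wrong votes that forms a majority
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(k_min, n + 1))


for n in (1, 3, 5, 11):
    print(n, f"{majority_error(n, 0.2):.4f}")
```

With `p = 0.2`, the chance of a wrong verdict falls from 0.2 with one validator to about 0.104 with three and about 0.058 with five. The independence assumption does the heavy lifting: validators running the same underlying model are likely to make the same mistakes, which is one reason diversity among participants matters.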

Simply stated, Mira is attempting to establish a trust layer for artificial intelligence.

Incentives that keep the system honest

Incentives are one of the things I always look at when studying crypto projects. Technology alone is rarely enough. These systems tend to work when participants are rewarded for honest behavior and penalized for dishonest behavior.

Mira proposes a token incentive system that motivates validators to submit correct verifications. Participants stake tokens to join the network and earn rewards when they verify correctly. If they submit careless or dishonest verifications, they can lose part of their stake.
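The reward-and-penalty loop can be sketched in a few lines. Everything specific here is an assumption for illustration: the flat reward, the 10% slash, and the rule of judging a vote "correct" by whether it matches the round's consensus are hypothetical, since the real parameters and rules are not described in this post. The names `Validator` and `settle_round` are likewise made up.

```python
from dataclasses import dataclass
from typing import Dict

# Hypothetical parameters, chosen only to illustrate the mechanism.
REWARD_PER_CORRECT_VOTE = 1.0
SLASH_FRACTION = 0.10  # share of stake lost for a vote that disagrees with consensus


@dataclass
class Validator:
    name: str
    stake: float


def settle_round(validators: Dict[str, Validator], votes: Dict[str, bool], consensus: bool) -> None:
    """Reward validators whose vote matched consensus; slash the stake of the rest.
    Using 'matched consensus' as a proxy for 'verified correctly' is an assumption."""
    for name, vote in votes.items():
        validator = validators[name]
        if vote == consensus:
            validator.stake += REWARD_PER_CORRECT_VOTE
        else:
            validator.stake -= validator.stake * SLASH_FRACTION


validators = {"alice": Validator("alice", 100.0), "bob": Validator("bob", 100.0)}
settle_round(validators, votes={"alice": True, "bob": False}, consensus=True)
print(validators["alice"].stake, validators["bob"].stake)
```

After one round, the validator who agreed with consensus gains a small reward while the one who did not loses ten units of stake. Making the penalty larger than the reward is what makes careless or dishonest voting unprofitable over time.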

A different way of thinking about AI infrastructure

The more I think about Mira, the more it looks like an infrastructure project rather than just another crypto token. It is not trying to build a better chatbot or a smarter model. Instead, it focuses on something deeper: making sure AI outputs can be trusted.

Mira is built around the idea that intelligence alone is not enough. What we need is intelligence we can rely on.