Multicoin Capital Partner Kyle Samani elaborates on 7 reasons why modular blockchains are overvalued.

Written by: Kyle Samani, Partner, Multicoin Capital

Compiled by: Luffy, Foresight News

Over the past two years, the blockchain scalability debate has centered on modularity versus integration.

Note that discussions in crypto often conflate "monolithic" and "integrated" systems. The technical debate over integrated versus modular systems spans 40 years. The conversation in crypto should be framed through that same historical lens; this is far from a new debate.

When considering modularity versus integration, the most important design decision a blockchain can make is the extent to which the complexity of the stack is exposed to application developers. The customers of blockchain are application developers, so final design decisions should take their perspective into account.

Today, modularity is largely hailed as the primary way blockchains scale. In this article, I’ll challenge this assumption from first principles, uncover the cultural myths and hidden costs of modular systems, and share the conclusions I’ve drawn from thinking about this debate over the past six years.

Modular systems increase development complexity

By far the biggest hidden cost of modular systems is the added complexity of the development process.

Modular systems greatly increase the complexity that application developers must manage, both in the context of their own applications (technical complexity) and in the context of interactions with other applications (social complexity).

In the context of cryptocurrencies, modular blockchains theoretically allow for greater specialization, but at the cost of creating new complexity. This complexity (both technical and social in nature) is being passed on to application developers, ultimately making it more difficult to build applications.

For example, consider the OP Stack. As of now, it seems to be the most popular modular framework. The OP Stack forces developers to choose between adopting the Law of Chains (which brings a lot of social complexity) or forking the stack and managing it separately. Both options create significant downstream complexity for builders. If you choose to fork, will you receive technical support from other ecosystem participants (CEXs, fiat on-ramps, etc.) who must incur costs to comply with a new technology standard? If you choose to follow the Law of Chains, what rules and constraints will be imposed on you today and tomorrow?

Source: OSI model

Modern operating systems (OS) are large, complex systems containing hundreds of subsystems. Modern operating systems handle layers 2-6 in the diagram above. This is a classic example of integrating modular components to manage stack complexity exposed to application developers. Application developers don't want to deal with anything below layer 7, which is why operating systems exist: the operating system manages the complexity of the layers below so that application developers can focus on layer 7. Therefore, modularity should not be a goal in itself, but a means to an end.

Every major software system in the world today—cloud backends, operating systems, database engines, game engines, etc.—is highly integrated and composed of many modular subsystems. Software systems tend to be highly integrated to maximize performance and reduce development complexity. The same is true for blockchain.

Ethereum, by the way, reduced the complexity that emerged during the 2011-2014 Bitcoin fork era. Modularity proponents often point to the Open Systems Interconnection (OSI) model to argue that data availability (DA) and execution should be separated; however, this argument is widely misunderstood. A proper understanding of the issue leads to the opposite conclusion: OSI is an argument for integrated systems rather than modular ones.

Modular chains cannot execute code faster

By design, a common definition of a "modular chain" is the separation of data availability (DA) and execution: one set of nodes is responsible for DA, while another set (or sets) of nodes are responsible for execution. The node collections do not have to have any overlap, but they can.

In practice, separating DA and execution does not inherently improve the performance of either: some hardware somewhere in the world must perform DA, and some hardware somewhere must perform execution. While separation can reduce computational costs, it can only do so by centralizing execution.

To reiterate: regardless of modular or integrated architecture, some hardware somewhere has to do the work, and separating DA and execution onto separate hardware does not inherently speed up or increase overall system capacity.

Some argue that modularity allows multiple EVMs to run in parallel in a rollup fashion, allowing execution to scale horizontally. While this is theoretically correct, this view actually emphasizes the limitations of the EVM as a single-threaded processor rather than the basic premise of separating DA and execution in the context of scaling the overall system throughput.

Modularity alone does not improve throughput.

Modularity increases transaction costs for users

By definition, each L1 and L2 is an independent asset ledger with its own state. These separate pieces of state can communicate, albeit with longer transaction latencies and more complexity for developers and users (via cross-chain bridges like LayerZero and Wormhole).

The more asset ledgers there are, the more fragmented the global state of all accounts becomes. This is terrible for both chains and users across multiple chains. State fragmentation may bring a series of consequences:

Reduced liquidity, resulting in higher trading slippage;

More total gas consumption (cross-chain transactions require at least two transactions on at least two asset ledgers);

More duplicated computation across asset ledgers (thus reducing overall system throughput): when the price of ETH-USDC moves on Binance or Coinbase, arbitrage opportunities arise on every ETH-USDC pool across all asset ledgers. It is easy to imagine a world where every ETH-USDC price change on Binance or Coinbase triggers 10+ transactions across the various asset ledgers. Keeping prices consistent across fragmented state is an extremely inefficient use of block space.

It’s important to realize that creating more asset ledgers significantly increases costs across all of these dimensions, especially those associated with DeFi.
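The liquidity point can be illustrated with a minimal sketch. It assumes a fee-less constant-product (x * y = k) pool, with hypothetical reserve and trade sizes that are not taken from any real market, and it assumes the same total liquidity is split evenly across a growing number of asset ledgers while the trade is routed to just one of them.

```python
def swap_output(reserve_in: float, reserve_out: float, amount_in: float) -> float:
    """Output of a constant-product (x * y = k) swap, ignoring fees."""
    return reserve_out * amount_in / (reserve_in + amount_in)


def slippage(reserve_in: float, reserve_out: float, amount_in: float) -> float:
    """Fraction by which the realized output falls short of the pre-trade spot price."""
    spot_output = amount_in * reserve_out / reserve_in
    return 1 - swap_output(reserve_in, reserve_out, amount_in) / spot_output


# Hypothetical figures, chosen only for illustration.
TOTAL_ETH = 10_000.0
TOTAL_USDC = 20_000_000.0
TRADE_ETH = 100.0

for ledgers in (1, 2, 5, 10):
    # Split the same total liquidity evenly across `ledgers` separate pools.
    eth, usdc = TOTAL_ETH / ledgers, TOTAL_USDC / ledgers
    print(f"{ledgers:>2} ledger(s): slippage {slippage(eth, usdc, TRADE_ETH):.2%}")
```

Under these assumptions the same 100 ETH trade sees roughly 1% slippage against one unified pool and roughly 9% against one of ten fragmented pools. Routing across pools would soften this, but only at the cost of the extra cross-ledger transactions listed above.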

The primary input to DeFi is on-chain state (i.e., who owns which assets). When teams launch application chains or rollups, they naturally create state fragmentation, which is very detrimental to DeFi, both for developers managing the complexity of the application (bridges, wallets, delays, cross-chain MEV, etc.) and for users (slippage, settlement delays).

The ideal condition for DeFi is that assets are issued on a single asset ledger and traded within a single state machine. The more asset ledgers there are, the more complexity application developers must manage and the higher the costs users must bear.

App Rollup won’t create new monetization opportunities for developers

AppChain/Rollup proponents believe that incentives will lead application developers to launch their own rollups rather than build on an L1 or L2, so that applications can capture MEV themselves. However, this idea is flawed: running an application rollup is not the only way to capture MEV back into application-layer tokens, and in most cases it is not the best way. Application-layer tokens can capture MEV simply by encoding the logic in smart contracts on a general-purpose chain. Let's consider a few examples:

Liquidation: If the Compound or Aave DAO wants to capture a portion of the MEV flowing to liquidation bots, it can simply update its contracts so that a portion of the fees currently flowing to liquidators is paid to the DAO instead, without the need for a new chain or rollup.

Oracle: Oracle tokens can capture MEV by providing back-running services. In addition to price updates, oracles can bundle arbitrary on-chain transactions that are guaranteed to run immediately after a price update, and sell that back-running service to searchers, block builders, etc.

NFT Minting: NFT minting is rife with scalping bots. This can be easily mitigated by encoding a decaying profit redistribution. For example, if someone attempts to resell their NFT within two weeks of minting, 100% of the proceeds could go back to the issuer or DAO. This percentage could change over time.

There is no universal answer to capturing MEV into application-layer tokens. However, with a little thought, application developers can easily capture MEV back into their own tokens on a general-purpose chain. Launching a brand-new chain is simply unnecessary and brings additional technical and social complexity to developers, as well as more wallet and liquidity concerns to users.
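The liquidation and NFT examples above amount to a few lines of contract logic. Here is a minimal Python sketch of that logic, not of any real protocol's contract: the function names, the `dao_share` parameter, and the linear decay schedule after the two-week window are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass


@dataclass
class LiquidationSplit:
    liquidator: float
    dao: float


def split_liquidation_bonus(bonus: float, dao_share: float) -> LiquidationSplit:
    """Route a fraction of the liquidation bonus to the DAO instead of the liquidator."""
    if not 0.0 <= dao_share <= 1.0:
        raise ValueError("dao_share must be between 0 and 1")
    dao_cut = bonus * dao_share
    return LiquidationSplit(liquidator=bonus - dao_cut, dao=dao_cut)


def resale_recapture_rate(
    days_since_mint: float,
    full_window_days: float = 14.0,  # the two-week window from the example above
    decay_days: float = 14.0,        # hypothetical: linear decay over two more weeks
) -> float:
    """Share of resale proceeds returned to the issuer or DAO.

    100% inside the initial window, then decaying linearly to 0%.
    """
    if days_since_mint <= full_window_days:
        return 1.0
    elapsed = days_since_mint - full_window_days
    return max(0.0, 1.0 - elapsed / decay_days)


split = split_liquidation_bonus(bonus=100.0, dao_share=0.25)
print(split)
print(resale_recapture_rate(7), resale_recapture_rate(21), resale_recapture_rate(28))
```

Both rules are ordinary state transitions that a contract on a general-purpose chain can enforce; neither requires control over consensus or block production.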

Application Rollup cannot resolve cross-application congestion issues

Many believe that Application Chain/Rollup ensures that applications are not affected by gas spikes caused by other on-chain activities, such as popular NFT minting. This view is partly true, but mostly wrong.

Historically, the root cause of this problem has been the single-threaded nature of the EVM, not the lack of separation between DA and execution. All L2s pay fees to L1, and L1 fees can rise at any time. During the memecoin craze earlier this year, transaction fees on Arbitrum and Optimism briefly exceeded $10. Optimism's fees also spiked recently following the launch of Worldcoin.

The only solutions to fee spikes are: 1) maximize L1 DA, and 2) make fee markets as granular as possible.

If L1 is resource constrained, usage spikes in individual L2s will be passed on to L1, which will impose higher costs on all other L2s. Therefore, application chain/Rollup is not immune to gas spikes.

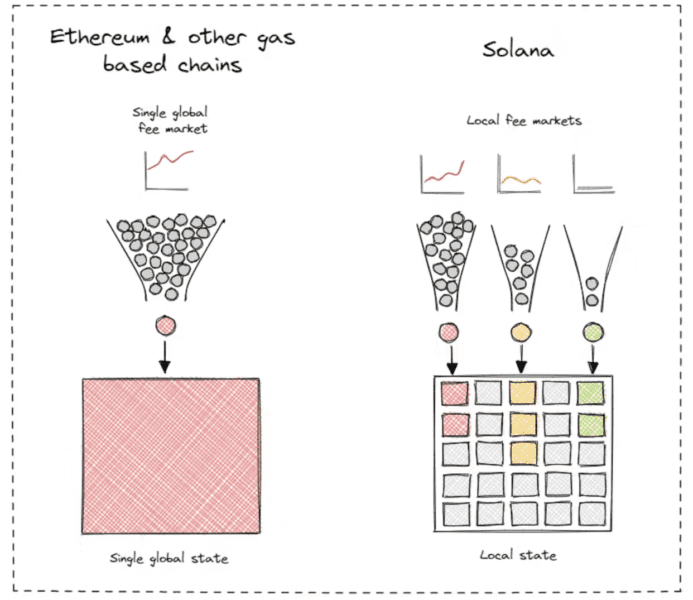

Running many EVM L2s side by side is just a crude way to localize fee markets. It's better than having a single fee market on one EVM L1, but it doesn't solve the core problem. Once you realize that the solution is localized fee markets, the logical endpoint is a fee market per piece of state (rather than a fee market per L2).
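The difference between one shared fee market and a fee market per piece of state can be sketched with a toy model. This is not how Solana, Aptos, or any EVM chain actually prices transactions: the base fee, the targets, the demand figures, and the linear congestion multiplier are hypothetical and only illustrate how congestion does or does not spill over.

```python
from typing import Dict

BASE_FEE = 1.0  # hypothetical fee units


def congestion_multiplier(demand: int, target: int) -> float:
    """Fee multiplier: 1x at or below target, growing linearly above it."""
    return max(1.0, demand / target)


def fees_single_market(demand: Dict[str, int], target: int) -> Dict[str, float]:
    """One shared fee market: every transaction pays for total demand."""
    total = sum(demand.values())
    fee = BASE_FEE * congestion_multiplier(total, target)
    return {key: fee for key in demand}


def fees_per_state(demand: Dict[str, int], target: int) -> Dict[str, float]:
    """Localized fee markets: a transaction pays only for contention on the state it touches."""
    return {
        key: BASE_FEE * congestion_multiplier(count, target)
        for key, count in demand.items()
    }


# Hypothetical pending transactions, grouped by the state they write to.
demand = {"nft_mint": 1_000, "dex_pool": 50, "game": 50}

print("single market:", fees_single_market(demand, target=500))
print("per-state:    ", fees_per_state(demand, target=100))
```

With these made-up numbers, the shared market charges every transaction 2.2x the base fee, while the per-state market charges the NFT mint 10x and leaves the DEX and game transactions at 1x. A per-L2 fee market sits between the two: it isolates the mint only if the mint happens to live on a different L2.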

Other chains have already reached this conclusion. Solana and Aptos naturally localize fee markets. This required extensive engineering work over many years for the respective execution environments. Most modular proponents severely underestimate the importance and difficulty of engineering local fee markets.

By launching multiple chains, developers are not unlocking real performance gains. When there are applications driving increased transaction volume, the costs of all L2 chains are affected.

Flexibility is overrated

Proponents of modular chains argue that modular architecture is more flexible. This statement is obviously true, but does it really matter?

For six years I've been trying to find application developers for whom a generic L1 couldn't provide meaningful flexibility. But so far, outside of three very specific use cases, no one has been able to articulate why flexibility is important and how it directly helps scale. Three specific use cases where I find flexibility is important are:

Applications that take advantage of "hot" state. Hot state is state that is needed to coordinate some set of operations in real time, but that is only committed to the chain temporarily and will not exist forever. A few examples of hot state:

Limit orders in DEXs such as dYdX and Sei (many limit orders end up being canceled).

Real-time coordination and identification of order flow in dFlow, a protocol that facilitates a decentralized order flow marketplace between market makers and wallets.

Oracles like Pyth, which is a low latency oracle. Pyth runs as a standalone SVM chain. Pyth generates so much data that the core Pyth team decided it was best to send high-frequency price updates to a standalone chain and then use Wormhole to bridge prices to other chains as needed.

Chains that modify consensus. The best examples are Osmosis (where all transactions are encrypted before being sent to validators) and Thorchain (where transactions within a block are prioritized based on fees paid).

Infrastructure that needs to leverage a threshold signature scheme (TSS) in some way. Some examples of this are Sommelier, Thorchain, Osmosis, Wormhole, and Web3Auth.

With the exception of Pyth and Wormhole, all examples listed above are built using the Cosmos SDK and run as standalone chains. This speaks volumes about the applicability and scalability of the Cosmos SDK for all three use cases: hot states, consensus modifications, and Threshold Signature Scheme (TSS) systems.

However, most of the projects in the above three use cases are not applications, they are infrastructure.

Pyth and dFlow are not applications, they are infrastructure. Sommelier, Wormhole, Sei, and Web3Auth are not applications, they are infrastructure. Among them, there is only one specific type of user-facing application: DEX (dYdX, Osmosis, Thorchain).

For six years, I’ve been asking Cosmos and Polkadot supporters about the use cases that result from the flexibility they offer. I think there's enough data to make some inferences:

First, the infrastructure examples should not exist as rollups, either because they produce too much low-value data (such as hot state, the whole point of which is that the data is not committed back to L1), or because they perform functions that are intentionally unrelated to state updates on an asset ledger (for example, all TSS use cases).

Second, the only type of application I've seen that could benefit from changing the core system design is a DEX, because DEXs are flooded with MEV and general-purpose chains cannot match the latency of CEXs. Consensus underpins trade execution quality and MEV, so changes to consensus naturally open up many opportunities for DEX innovation. However, as mentioned earlier in this article, the main input to a spot DEX is the assets being traded. DEXs compete for assets and therefore for asset issuers. Under this framework, standalone DEX chains are unlikely to succeed, because the primary question asset issuers consider when issuing assets is not DEX-related MEV but general smart contract functionality and how to incorporate that functionality into their respective applications.

However, derivatives DEXs do not need to compete for asset issuers. They mainly rely on collateral such as USDC and on oracle price feeds, and they must lock user assets to collateralize derivatives positions anyway. So, to the extent that standalone DEX chains make sense, they are most likely to work for derivatives-focused DEXs like dYdX and Sei.

Let's consider the applications that exist on general-purpose integrated L1s today, including: games, DeSoc systems (such as Farcaster and Lens), DePIN protocols (such as Helium, Hivemapper, Render Network, DIMO, and Daylight), Sound, NFT exchanges, and more. None of these particularly benefit from the flexibility of modifying consensus, and their respective asset ledgers have a fairly simple, obvious, and common set of requirements: low fees, low latency, access to spot DEXs, access to stablecoins, and access to fiat on-ramps such as CEXs.

I believe we now have enough data to say with some confidence that the vast majority of user-facing applications share the general requirements enumerated in the previous paragraph. While some applications can optimize other variables at the margin with custom features in the stack, the trade-offs that come with these customizations are usually not worth it (more bridges, less wallet support, less indexer and query tooling support, fewer fiat on-ramps, etc.).

Rolling out new asset ledgers is one way to achieve flexibility, but it rarely adds value and almost always introduces technical and social complexity with little ultimate benefit to application developers.

Scaling DA does not require restaking

You'll also hear modular proponents talk about restaking in the context of scaling. This is the most speculative argument made by modular chain proponents, but it's worth discussing.

It roughly states that thanks to restaking (e.g., through systems like EigenLayer), the entire crypto ecosystem can restake ETH an unlimited number of times, powering an unlimited number of DA layers (e.g., EigenDA) and execution layers. Scalability is therefore solved on all fronts while ensuring that ETH accrues value.

Setting aside the huge uncertainty between the current situation and that theoretical future, let's take it for granted that all of these layered assumptions work as advertised.

Ethereum's DA is currently around 83 KB/s. With the introduction of EIP-4844 later this year, that can roughly double to about 166 KB/s. EigenDA can add an additional 10 MB/s, but requires a different set of security assumptions (not all ETH will be restaked to EigenDA).

By comparison, Solana currently offers DA of about 125 MB/s (32,000 shreds per block, 1,280 bytes per shred, 2.5 blocks per second). Solana is much more efficient than Ethereum and EigenDA. Additionally, Solana's DA scales over time in line with Nielsen's law.
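The DA figures quoted above can be put side by side with some back-of-the-envelope arithmetic. This sketch uses only the numbers stated in the text and assumes decimal units (1 MB = 1,000,000 bytes). Multiplying out the parenthetical shred figures gives roughly 102 MB/s, which is the same order of magnitude as the quoted figure of about 125 MB/s; the difference may come from rounding or from assumptions not stated here.

```python
# All inputs are the figures quoted in the text; decimal units are an assumption.
KB = 1_000
MB = 1_000_000

ethereum_da_today = 83 * KB                 # ~83 KB/s
ethereum_da_4844 = 2 * ethereum_da_today    # "roughly double" after EIP-4844
eigenda_extra = 10 * MB                     # additional ~10 MB/s from EigenDA

# Solana: shreds per block * bytes per shred * blocks per second.
SHREDS_PER_BLOCK = 32_000
BYTES_PER_SHRED = 1_280
BLOCKS_PER_SECOND = 2.5
solana_da = SHREDS_PER_BLOCK * BYTES_PER_SHRED * BLOCKS_PER_SECOND

figures = {
    "Ethereum today": ethereum_da_today,
    "Ethereum after EIP-4844": ethereum_da_4844,
    "Ethereum after EIP-4844 + EigenDA": ethereum_da_4844 + eigenda_extra,
    "Solana (from shred figures)": solana_da,
}

for name, bytes_per_second in figures.items():
    print(f"{name:<36} {bytes_per_second / MB:>9.3f} MB/s")

ratio = solana_da / (ethereum_da_4844 + eigenda_extra)
print(f"Solana vs. Ethereum after EIP-4844 + EigenDA: {ratio:.1f}x")
```

Even using the lower shred-derived number, the gap to Ethereum plus EigenDA is about an order of magnitude under these quoted figures.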

There are many ways to scale DA through restaking and modularization, but these mechanisms are simply not necessary today, and they introduce significant technical and social complexity.

Built for application developers

After years of thinking about it, I've come to the conclusion that modularity should not be a goal in itself.

Blockchains must serve their customers (i.e., application developers). Blockchains should therefore abstract away infrastructure-level complexity so that developers can focus on building world-class applications.

Modularity is great. But the key to building winning technology is figuring out which parts of the stack need to be integrated and which parts can be left to others. For now, chains that integrate DA and execution inherently offer a simpler end-user and developer experience, and will ultimately provide a better foundation for best-in-class applications.

This article is reprinted from Foresight News with permission

This article, "Venture Capital Institution Multicoin Partner: Why Modular Blockchain Is Overvalued," first appeared on Zombit.