Author: Yiping, IOSG Ventures

Foreword

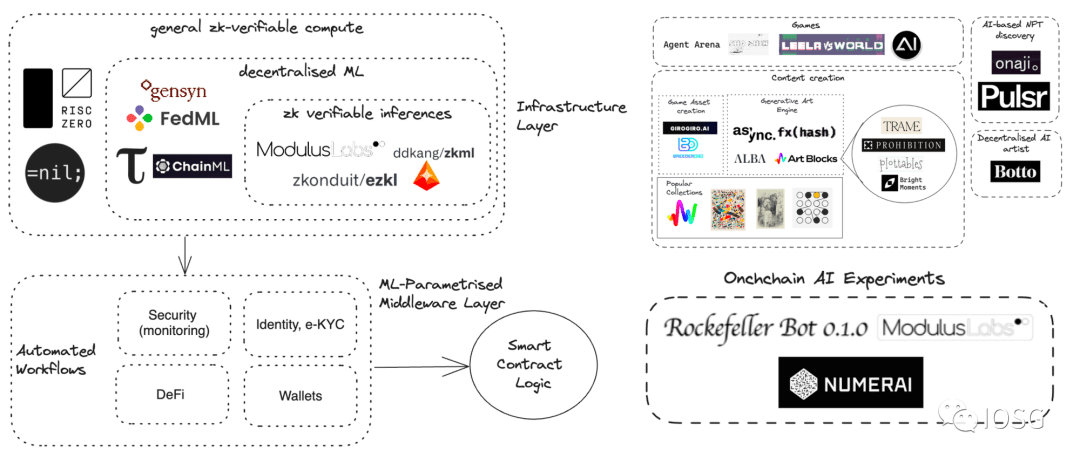

As large language models (LLMs) gain momentum, we are seeing a growing number of projects that combine artificial intelligence (AI) with blockchain. This combination is also opening up opportunities to bring AI onto the blockchain itself. One of the most noteworthy directions is zero-knowledge machine learning (ZKML).

Artificial intelligence and blockchain are two transformative technologies with fundamentally different characteristics. Artificial intelligence requires substantial computing power, which is usually provided by centralized data centers. Blockchain provides decentralized computing and privacy protection, but performs poorly on tasks that require large-scale computing and storage. We are still researching best practices for integrating artificial intelligence and blockchain, and will introduce some current "AI + blockchain" projects in future articles.

Source: IOSG Ventures

This research report is divided into two parts, and this article is the first. We focus on how LLMs can be applied in the crypto field and explore strategies for putting those applications into practice.

What is LLM?

An LLM (Large Language Model) is a language model built on an artificial neural network with a large number of parameters (usually billions). These models are trained on large amounts of unlabeled text.

Around 2018, the emergence of LLMs transformed research in natural language processing. Unlike previous methods, which required training a dedicated supervised model for each task, an LLM is a general-purpose model that performs well on a variety of tasks. Its capabilities and applications include:

Understanding and summarizing text: LLMs can understand and summarize large amounts of human language and text data. They can extract key information and generate concise summaries.

Generating new content: LLMs can generate text-based content. Given a prompt, the model can answer questions, produce new text, write summaries, or perform sentiment analysis.

Translation: LLMs can be used to translate between different languages. They use deep learning algorithms and neural networks to understand the context and relationships between words.

Predicting and generating text: LLMs can predict and generate text based on context, similar to human-generated content, including songs, poems, stories, marketing materials, etc.

Applications in various fields: Large language models have wide applicability in natural language processing tasks. They are used in many fields such as conversational AI, chatbots, healthcare, software development, search engines, tutoring, writing tools, etc.

The advantages of LLM include its ability to make sense of large amounts of data, its ability to perform a variety of language-related tasks, and its potential to customize results based on user needs.

Common large-scale language model applications

Due to its outstanding natural language understanding capabilities, LLM has considerable potential, and developers mainly focus on the following two aspects:

Provide users with accurate and up-to-date answers based on a wealth of contextual data and content

Complete specific tasks assigned by users by using different agents and tools

These two aspects have led to a proliferation of "chat with X" LLM applications, such as chat with PDF, chat with documents, and chat with academic papers.

Subsequently, people tried to integrate LLMs with various data sources. Developers have successfully integrated platforms such as GitHub, Notion, and some note-taking software with LLMs.

To overcome the inherent limitations of LLMs, different tools were incorporated into the system. The first such tool was a search engine, which gave the LLM access to up-to-date knowledge. Later work integrated tools such as WolframAlpha, Google Suites, and Etherscan with large language models.
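As a rough illustration of how tools can be attached to an LLM, the sketch below routes a model-emitted action line to a registered tool. Everything here is an assumption made for illustration: the `tool_name: argument` action format, the `run_tool` helper, and the two stub tools, which stand in for a search engine and a block explorer and do not call any real service.

```python
from typing import Callable, Dict


# Hypothetical stub tools; a real application would call an external API here.
def web_search(query: str) -> str:
    return f"[search results for: {query}]"


def explorer_lookup(tx_hash: str) -> str:
    return f"[transaction details for: {tx_hash}]"


# Registry mapping the tool names the model may emit to Python callables.
TOOLS: Dict[str, Callable[[str], str]] = {
    "search": web_search,
    "explorer": explorer_lookup,
}


def run_tool(action: str) -> str:
    """Parse a 'tool_name: argument' line emitted by the model and run the tool."""
    name, sep, argument = action.partition(":")
    name = name.strip().lower()
    if not sep or name not in TOOLS:
        # Returning the error as text lets the model see it and try again.
        return f"unknown tool: {name}"
    return TOOLS[name](argument.strip())
```

In this design the tool result is returned as plain text so that it can be appended to the next prompt, which is how the model gets to "see" information it was not trained on.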

LLM Apps Architecture

The following diagram outlines how an LLM application responds to a user query. First, relevant data sources are converted into embedding vectors and stored in a vector database. The LLM adapter then takes the user query and runs a similarity search to find relevant context in the vector database. The relevant context is placed into prompts and sent to the LLM, which executes these prompts and uses tools to generate answers. Sometimes the LLM is fine-tuned on specific datasets to improve accuracy and reduce costs.
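The retrieval flow described above can be sketched in a few lines. This is a minimal sketch under stated assumptions, not how any particular product works: `embed` is a toy bag-of-words stand-in for a real embedding model, `VectorStore` is an in-memory list rather than a real vector database, and `build_prompt` is a hypothetical template.

```python
import math
import re
from collections import Counter
from typing import Dict, List, Tuple

Vector = Dict[str, float]


def embed(text: str) -> Vector:
    """Toy embedding (assumption): a unit-length bag-of-words vector."""
    counts = Counter(re.findall(r"[a-z0-9]+", text.lower()))
    norm = math.sqrt(sum(c * c for c in counts.values())) or 1.0
    return {word: c / norm for word, c in counts.items()}


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity; both inputs are already unit length."""
    return sum(weight * b.get(word, 0.0) for word, weight in a.items())


class VectorStore:
    """In-memory stand-in for a vector database."""

    def __init__(self) -> None:
        self.items: List[Tuple[str, Vector]] = []

    def add(self, text: str) -> None:
        self.items.append((text, embed(text)))

    def search(self, query: str, k: int = 2) -> List[str]:
        q = embed(query)
        ranked = sorted(self.items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


def build_prompt(query: str, context: List[str]) -> str:
    """Place the retrieved context into a prompt for the LLM."""
    joined = "\n".join(f"- {c}" for c in context)
    return f"Answer using only this context:\n{joined}\n\nQuestion: {query}"
```

A caller would `add` each document once, then call `search` with the user query and pass the result to `build_prompt`; the resulting string is what gets sent to the model.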

The workflow of LLM application can be roughly divided into three main stages:

Data preparation and embedding: This stage stores private information (such as project memos) so that it can be retrieved later. Typically, the documents are split into chunks, processed through an embedding model, and saved in a special type of database called a vector database.

Prompt formulation and extraction: When a user submits a search request (in this case, searching for project information), the software creates a series of prompts that are input into the language model. The final prompt usually contains a prompt template hard-coded by the software developer, examples of valid output that serve as few-shot examples, any required data obtained from external APIs, and related documents extracted from the vector database.

Prompt execution and inference: Once the prompts are complete, they are fed into pre-trained language models for inference. These may be proprietary model APIs, open-source models, or individually fine-tuned models. At this stage, some developers also add operational components such as logging, caching, and validation to the system.
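The three stages above can be sketched as follows. This is an illustrative sketch only: the chunk sizes, the `TEMPLATE` wording, the few-shot example, and the `CachedRunner` class are all assumptions, and `model` is any callable that maps a prompt string to an answer string (a stand-in for a real model API).

```python
import hashlib
import logging
from typing import Callable, Dict, List, Tuple

log = logging.getLogger("llm_app")


def chunk(text: str, size: int = 200, overlap: int = 50) -> List[str]:
    """Stage 1: split a document into overlapping character windows for embedding."""
    if overlap >= size:
        raise ValueError("overlap must be smaller than size")
    if not text:
        return []
    step = size - overlap
    return [text[i:i + size] for i in range(0, max(len(text) - overlap, 1), step)]


# Stage 2: a hard-coded template with one few-shot example (hypothetical wording).
TEMPLATE = (
    "You answer questions about crypto projects.\n"
    "Example:\nQ: {example_q}\nA: {example_a}\n\n"
    "Context:\n{context}\n\nQ: {question}\nA:"
)


def format_prompt(
    question: str,
    retrieved: List[str],
    example: Tuple[str, str] = ("What is a DEX?", "A decentralized exchange."),
) -> str:
    return TEMPLATE.format(
        example_q=example[0],
        example_a=example[1],
        context="\n".join(retrieved),
        question=question,
    )


class CachedRunner:
    """Stage 3: run prompts through a model with logging, caching, and validation."""

    def __init__(self, model: Callable[[str], str]) -> None:
        self.model = model
        self.cache: Dict[str, str] = {}
        self.calls = 0  # number of real model invocations

    def run(self, prompt: str) -> str:
        key = hashlib.sha256(prompt.encode("utf-8")).hexdigest()
        if key in self.cache:
            log.info("cache hit %s", key[:8])
            return self.cache[key]
        self.calls += 1
        answer = self.model(prompt).strip()
        if not answer:  # minimal validation: reject empty output
            raise ValueError("model returned an empty answer")
        self.cache[key] = answer
        return answer
```

Caching on a hash of the full prompt means that identical questions over identical context do not trigger a second model call, which is one simple way to reduce inference cost.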

Bringing LLM to the crypto space

Although the crypto space (Web3) has some applications similar to those in Web2, developing excellent LLM applications in the crypto space requires particular care.

The crypto ecosystem is unique, with its own culture, data, and integrations. LLMs fine-tuned on crypto-specific datasets can provide superior results at a relatively low cost. While data is abundantly available, there is a distinct lack of open datasets on platforms like HuggingFace. At the time of writing, we found only one dataset related to smart contracts, which contains 113,000 smart contracts.

Developers also face the challenge of integrating different tools with LLMs. These tools differ from those used in Web2: they give the LLM the ability to access transaction-related data, interact with decentralized applications (Dapps), and perform transactions. So far, we have not found any Dapp integration in LangChain.
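To make "access to transaction-related data" more concrete, here is a small sketch of a tool that turns a raw transaction record into a sentence an LLM can read. The `describe_transaction` helper and the shortened placeholder addresses are hypothetical; the sketch only assumes a record with `from`, `to`, and a hex-encoded `value` in wei, as Ethereum JSON-RPC responses provide, and relies on the fact that 1 ether equals 10^18 wei.

```python
from decimal import Decimal

WEI_PER_ETHER = Decimal(10) ** 18


def describe_transaction(tx: dict) -> str:
    """Render a raw transaction record as plain text for use in a prompt."""
    value_wei = int(tx["value"], 16)  # hex-encoded quantity in wei
    value_eth = Decimal(value_wei) / WEI_PER_ETHER
    # normalize() drops trailing zeros; the 'f' format avoids exponent notation.
    return f"{tx['from']} sent {value_eth.normalize():f} ETH to {tx['to']}"


# Placeholder record for illustration only; the addresses are not real.
example_tx = {"from": "0xSender", "to": "0xReceiver", "value": hex(5 * 10**17)}
summary = describe_transaction(example_tx)
```

Converting on-chain values into plain text before they reach the prompt spares the model from doing hex and unit arithmetic, which language models tend to handle unreliably.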

Although developing high-quality crypto LLM applications may require additional investment, LLM is a natural fit for the crypto field. This field provides rich, clean, and structured data. In addition, Solidity code is usually concise and clear, which makes it easier for LLM to generate functional code.

In the second part, we will discuss 8 potential directions in which LLM can help the blockchain field, such as:

Integrating built-in AI/LLM capabilities into blockchain

Analyzing transaction records using LLM

Identifying potential bots using LLM

Writing code using LLM

Reading code with LLM

Helping the community with LLM

Tracking the market with LLM

Analyzing projects with LLM