Original author: YBB Capital Zeke

Preface

On February 16, OpenAI announced "Sora", its latest text-conditioned diffusion model for video generation. The high-quality sample videos, which cover a wide range of visual data types, mark another milestone for generative AI. Unlike AI video tools such as Pika, which are still at the stage of generating a few seconds of video from a handful of images, Sora achieves scalable video generation by training in a compressed latent space of videos and images and decomposing that latent representation into spacetime patches. The model also shows an ability to simulate both the physical and digital worlds. Judging from the final 60-second demo, it is no exaggeration to call it a "universal simulator of the physical world."

In terms of construction, Sora continues the "big data-Transformer-Diffusion-emergence" technical path of the earlier GPT models, which means its development and maturation also depend on computing power as the engine. Because video training requires far more data than text training, the demand for computing power will rise even further. We already discussed the importance of computing power in the AI era in our earlier article "Prospects of Potential Tracks: Decentralized Computing Power Market". With the recent surge in AI's popularity, a large number of computing power projects have appeared on the market, and other DePIN projects (storage, computing power, etc.) that benefited passively have also seen a wave of price surges. So beyond DePIN, what sparks can the intersection of Web3 and AI produce? What opportunities does this track hold? This article updates and supplements our past articles and considers the possibilities for Web3 in the AI era.

Three major directions in the history of AI development

Artificial Intelligence (AI) is an emerging science and technology that aims to simulate, expand and enhance human intelligence. Since its birth in the 1950s and 1960s, AI has become an important technology that promotes changes in social life and all walks of life after more than half a century of development. In this process, the intertwined development of the three major research directions of symbolism, connectionism and behaviorism has become the cornerstone of the rapid development of AI today.

Symbolism

Also known as logicism or the rule-based approach, symbolism holds that human intelligence can be simulated by processing symbols. It uses symbols to represent and manipulate the objects, concepts, and relationships in a problem domain, and applies logical reasoning to solve problems. It has achieved notable results in expert systems and knowledge representation. Its core view is that intelligent behavior can be achieved through symbol manipulation and logical reasoning, where symbols are high-level abstractions of the real world.
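To make the idea of reasoning over symbols concrete, here is a minimal sketch of forward chaining, one common inference strategy in rule-based expert systems. The facts and rules are a hypothetical toy knowledge base invented for illustration and do not come from any real system.

```python
def forward_chain(facts, rules):
    """Apply if-then rules repeatedly until no new fact can be derived."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            # A rule fires when all its premises are known and its conclusion is new.
            if conclusion not in known and all(p in known for p in premises):
                known.add(conclusion)
                changed = True
    return known


# Hypothetical toy knowledge base: (premises, conclusion) pairs.
RULES = [
    (("has_feathers",), "is_bird"),
    (("is_bird", "cannot_fly", "swims"), "is_penguin"),
]

derived = forward_chain({"has_feathers", "cannot_fly", "swims"}, RULES)
print(sorted(derived))
```

Everything the program "knows" is written down explicitly as symbols and rules, which is what distinguishes this approach from the data-driven learning described under connectionism.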

Connectionism

Also known as the neural network approach, connectionism aims to achieve intelligence by imitating the structure and function of the human brain. It learns by building a network of many simple processing units (similar to neurons) and adjusting the connection strengths between these units (similar to synapses). Connectionism places special emphasis on the ability to learn and generalize from data, and is particularly well suited to pattern recognition, classification, and continuous input-output mapping problems. Deep learning, as a development of connectionism, has made breakthroughs in image recognition, speech recognition, and natural language processing.

Behaviorism

Behaviorism is closely related to the study of bionic robotics and autonomous intelligent systems, emphasizing that intelligent agents can learn through interaction with the environment. Unlike the previous two, behaviorism does not focus on simulating internal representations or thinking processes, but on achieving adaptive behavior through a cycle of perception and action. Behaviorism believes that intelligence is demonstrated through dynamic interaction and learning with the environment. This approach is particularly effective when applied to mobile robots and adaptive control systems that need to act in complex and unpredictable environments.
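The perception-action cycle can be sketched in a few lines, assuming a hypothetical one-dimensional agent that has to reach a target position. The agent keeps no internal model of the world and only reacts to what it currently senses. The function name and the gain value are illustrative, and the sketch shows the feedback loop itself rather than any particular learning algorithm.

```python
def run_agent(target, position=0.0, gain=0.5, steps=20):
    """Drive a 1-D agent toward a target using only the currently sensed error."""
    trajectory = [position]
    for _ in range(steps):
        error = target - position  # perception: sense the distance to the target
        action = gain * error      # action: respond to the current percept only
        position += action         # the environment changes as a result
        trajectory.append(position)
    return trajectory


path = run_agent(target=10.0)
print(round(path[-1], 3))
```

Each pass through the loop halves the remaining error, so the behavior adapts to wherever the agent happens to be without any explicit plan.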

Although there are essential differences between these three research directions, in actual AI research and applications, they can also interact and integrate to jointly promote the development of the AI field.

AIGC Principle Overview

Generative AI, or AIGC (Artificial Intelligence Generated Content), is currently experiencing explosive growth and is an evolution and application of connectionism. AIGC models can imitate human creativity to generate novel content. They are trained on large data sets with deep learning algorithms to learn the underlying structures, relationships, and patterns in the data. Given a user's prompt, they generate novel and unique outputs, including images, videos, code, music, designs, translations, answers to questions, and text. Today's AIGC essentially rests on three elements: deep learning (DL), big data, and large-scale computing power.

Deep Learning

Deep learning is a subfield of machine learning (ML), and deep learning algorithms are neural networks loosely modeled on the human brain. The human brain contains billions of interconnected neurons that work together to learn and process information. Similarly, deep learning neural networks (or artificial neural networks) are composed of multiple layers of artificial neurons working together inside a computer. Artificial neurons are software modules called nodes that use mathematical calculations to process data. Artificial neural networks are deep learning algorithms that use these nodes to solve complex problems.

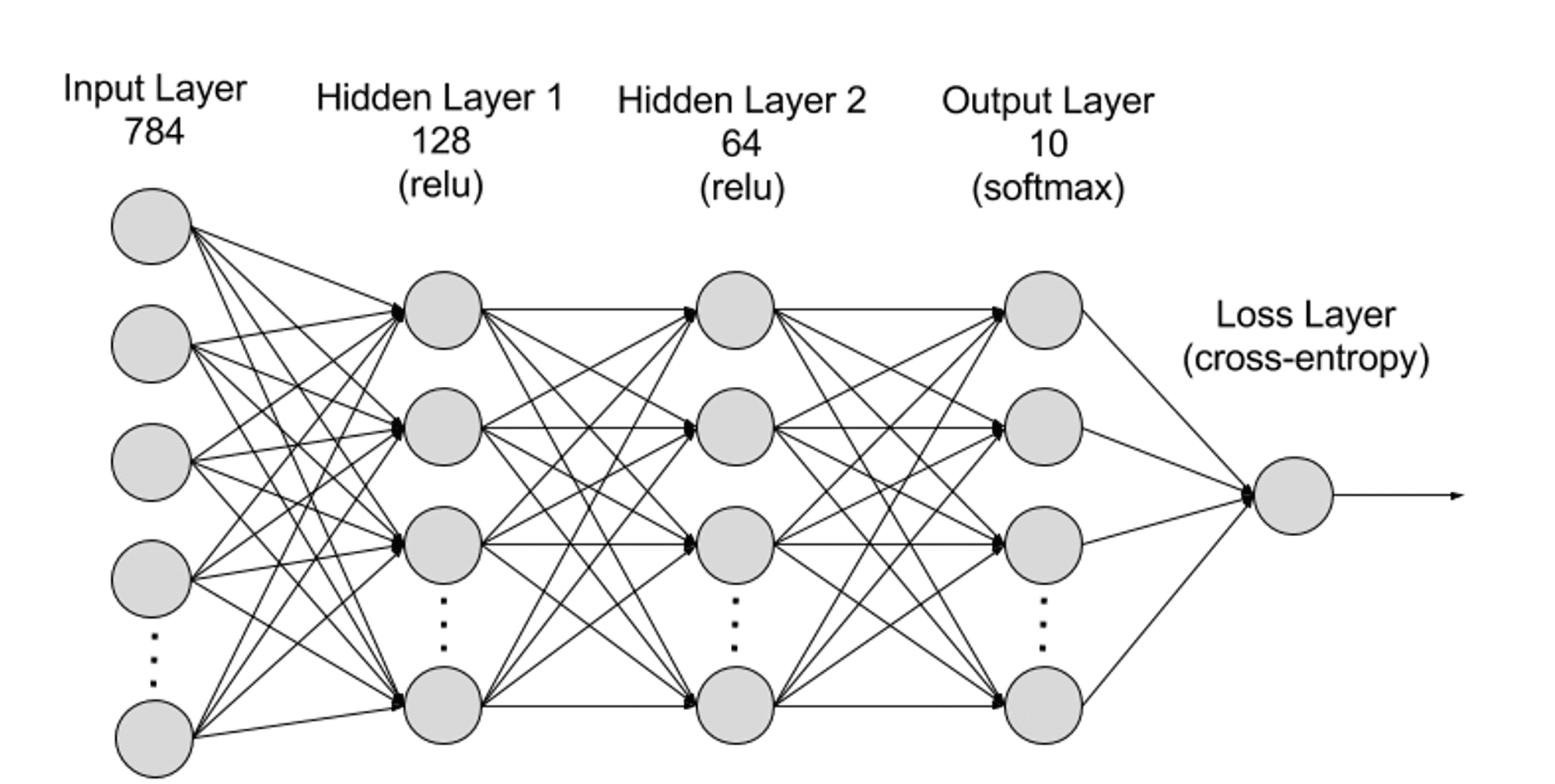

From a hierarchical perspective, a neural network can be divided into an input layer, hidden layers, and an output layer, and the connections between layers carry the parameters.

Input Layer: The input layer is the first layer of the neural network and is responsible for receiving external input data. Each neuron in the input layer corresponds to a feature of the input data. For example, when processing image data, each neuron may correspond to a pixel value of the image.

Hidden Layer: The input layer processes the data and passes it to further layers in the neural network. These hidden layers process information at different levels and adjust their behavior as they receive new information. Deep learning networks can have hundreds of hidden layers, which lets them analyze a problem from many different angles. For example, if you are given an image of an unknown animal to classify, you compare it with animals you already know, looking at the shape of the ears, the number of legs, and the size of the pupils. The hidden layers in a deep neural network work in a similar way: when a deep learning algorithm tries to classify an image of an animal, each hidden layer processes different features of the animal and contributes to an accurate classification.

Output Layer: The output layer is the last layer of the neural network and is responsible for generating the output of the network. Each neuron in the output layer represents a possible output category or value. For example, in a classification problem, each output layer neuron may correspond to a category, while in a regression problem, the output layer may have only one neuron whose value represents the predicted result.

Parameters: In a neural network, the connections between different layers are represented by weights and biases, which are optimized during the training process to enable the network to accurately identify patterns in the data and make predictions. Increasing parameters can increase the model capacity of the neural network, that is, the model's ability to learn and represent complex patterns in the data. However, the corresponding increase in parameters will increase the demand for computing power.
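The layer structure and the role of parameters described above can be sketched with a tiny fully connected network in plain Python. The layer sizes (4 input features, 8 hidden neurons, 3 output classes), the random initialization, and the tanh activation are illustrative assumptions, not details taken from any specific model.

```python
import math
import random


def init_layer(n_in, n_out, rng):
    """One fully connected layer: a weight per connection plus a bias per neuron."""
    weights = [[rng.uniform(-1.0, 1.0) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases


def forward(layers, x):
    """Pass an input vector through every layer in turn."""
    for i, (weights, biases) in enumerate(layers):
        z = [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weights, biases)]
        is_last = i == len(layers) - 1
        # tanh non-linearity on hidden layers, raw scores on the output layer
        x = z if is_last else [math.tanh(v) for v in z]
    return x


def count_parameters(layers):
    """Total number of weights and biases across all layers."""
    return sum(len(w) * len(w[0]) + len(b) for w, b in layers)


rng = random.Random(0)
sizes = [4, 8, 3]  # input features, hidden neurons, output classes (assumed)
layers = [init_layer(a, b, rng) for a, b in zip(sizes, sizes[1:])]
scores = forward(layers, [0.5, -0.2, 0.1, 0.9])
print(len(scores), count_parameters(layers))
```

Even this toy network has 67 parameters (4×8 + 8 in the first layer, 8×3 + 3 in the second). Widening the hidden layer to 16 neurons raises the count to 131, which hints at how quickly capacity, and with it the compute bill, grows.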

Big Data

To be trained effectively, neural networks usually require large amounts of diverse, high-quality data from multiple sources. This data is the basis for training and validating machine learning models. By analyzing big data, machine learning models can learn the patterns and relationships in the data and use them to make predictions or classifications.

Large-scale computing power

Several factors together drive the need for high computing power: the multi-layered, complex structure of neural networks; the large number of parameters; big data processing requirements; iterative training (during training, the model is iterated repeatedly, and every iteration runs forward propagation and backpropagation through each layer, including the computation of activation functions, loss functions, gradients, and weight updates); high-precision computing requirements; parallel computing capabilities; optimization and regularization techniques; and model evaluation and validation.
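The iterative training cycle of forward propagation, loss, gradients, and weight updates can be sketched with the smallest possible model: a single weight and bias fitted by gradient descent. The data set, learning rate, and epoch count are assumptions chosen for illustration. Real networks repeat the same cycle over billions of parameters, which is where the compute demand comes from.

```python
def mse(preds, ys):
    """Loss function: mean squared error between predictions and targets."""
    return sum((p - y) ** 2 for p, y in zip(preds, ys)) / len(ys)


def train(xs, ys, lr=0.05, epochs=2000):
    """Fit y = w * x + b by repeating forward pass, gradient computation, and update."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Forward propagation: compute predictions with the current parameters.
        preds = [w * x + b for x in xs]
        # Backward propagation: gradients of the MSE loss with respect to w and b.
        grad_w = sum(2 * (p - y) * x for p, y, x in zip(preds, ys, xs)) / n
        grad_b = sum(2 * (p - y) for p, y in zip(preds, ys)) / n
        # Weight update: step against the gradient.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [2 * x + 1 for x in xs]  # toy data generated from y = 2x + 1
w, b = train(xs, ys)
final_loss = mse([w * x + b for x in xs], ys)
print(round(w, 3), round(b, 3))
```

With five data points and two parameters, each epoch costs a few dozen arithmetic operations. The same loop over a large model and a large data set multiplies that cost by many orders of magnitude.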

Sora

As OpenAI's latest video generation model, Sora represents a huge step forward in AI's ability to process and understand diverse visual data. By using a video compression network and spacetime patching, Sora can convert massive amounts of visual data, captured around the world by different devices, into a unified representation, enabling efficient processing and understanding of complex visual content. Building on a text-conditioned diffusion model, Sora can generate videos or images that closely match a text prompt, showing a very high degree of creativity and adaptability.
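The idea of cutting a video into patches across both space and time can be sketched as follows. This is only an illustration of the general technique: OpenAI has not published Sora's implementation, the compression step is skipped entirely, and the function name, patch sizes, and toy "latent video" are all assumptions.

```python
def to_spacetime_patches(video, pt, ph, pw):
    """Split a [frames][height][width] grid into flattened pt x ph x pw blocks."""
    T, H, W = len(video), len(video[0]), len(video[0][0])
    if T % pt or H % ph or W % pw:
        raise ValueError("patch size must divide the video dimensions")
    patches = []
    for t0 in range(0, T, pt):
        for y0 in range(0, H, ph):
            for x0 in range(0, W, pw):
                # Each patch spans several frames as well as a spatial region.
                patch = [
                    video[t][y][x]
                    for t in range(t0, t0 + pt)
                    for y in range(y0, y0 + ph)
                    for x in range(x0, x0 + pw)
                ]
                patches.append(patch)
    return patches


# Toy 4-frame, 4x4 "latent video" whose values encode their own position.
video = [[[t * 100 + y * 10 + x for x in range(4)] for y in range(4)] for t in range(4)]
patches = to_spacetime_patches(video, pt=2, ph=2, pw=2)
print(len(patches), len(patches[0]))
```

Because every video, whatever its resolution or duration, ends up as a sequence of same-sized patches, a Transformer can treat them much like tokens in a sentence. That is the sense in which the representation is unified.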

However, despite Sora's breakthroughs in video generation and in simulating real-world interactions, it still faces limitations, including the accuracy of its physical world simulation, consistency in long videos, understanding of complex text instructions, and the efficiency of training and generation. In addition, Sora essentially continues the old "big data-Transformer-Diffusion-emergence" technical path, relying on OpenAI's monopoly-level computing power and first-mover advantage to achieve a kind of brute-force aesthetic. Other AI companies can still overtake it by taking a different technical route.

Although Sora has little to do with blockchain, I personally believe that over the next one or two years its impact will push other high-quality AI generation tools to emerge and develop rapidly, and that this will spread to multiple tracks within Web3, such as GameFi, social networking, creative platforms, and DePIN. A general understanding of Sora is therefore necessary, and how AI can be combined effectively with Web3 is a key question we need to think about.

Four major paths of AI x Web3

As discussed above, generative AI rests on just three foundations: algorithms, data, and computing power. Seen from the perspective of versatility and output quality, AI is a tool that upends the mode of production. Blockchain, for its part, plays two major roles: restructuring production relations and decentralization. I therefore personally think the collision of the two can produce four paths:

Decentralized computing power

Since we have written about this before, the main purpose of this section is to update the status of the computing power track. When it comes to AI, computing power is a link that cannot be avoided, and after the birth of Sora, AI's demand for it has become almost unimaginable. Recently, during the 2024 World Economic Forum in Davos, Switzerland, OpenAI CEO Sam Altman stated bluntly that computing power and energy are the biggest constraints at this stage, and that in the future their importance may even be equivalent to that of currency. On February 10, Sam Altman published an astonishing plan on Twitter to raise $7 trillion (roughly 40% of China's 2023 GDP) to rewrite the current global semiconductor landscape and create a chip empire. When I wrote my earlier articles on computing power, my imagination was still limited to national blockades and monopolies by giants. It is truly crazy for a single company to want to control the global semiconductor industry.

The importance of decentralized computing power is therefore self-evident. The characteristics of blockchain can indeed address the current extreme monopoly on computing power and the high price of dedicated GPUs. From the perspective of AI requirements, the use of computing power falls into two directions: inference and training. Very few projects focus on training. It requires combining decentralized network design with neural network design and places extremely high demands on hardware, so it is bound to be a direction with very high barriers that is very hard to implement. Inference is comparatively simple. The decentralized network design is less complicated, and the hardware and bandwidth requirements are relatively low, which makes it the more mainstream direction at present.

The potential of the decentralized computing power market is huge. It is often associated with the keyword "trillion-level" and is the most frequently hyped topic of the AI era. However, judging from the many projects that have emerged recently, most are still being rushed to market to ride the hype. They hold high the politically correct banner of decentralization but stay silent about the inefficiency of decentralized networks. The designs are also highly homogeneous, with many projects looking very similar (a one-click L2 plus a mining design), which may well end in a mess. Under these conditions, it is genuinely difficult to take a share of the traditional AI track.

Algorithm and model collaboration system

Machine learning algorithms are algorithms that can learn rules and patterns from data and make predictions or decisions based on them. Algorithms are technology-intensive because their design and optimization require deep expertise and technological innovation. Algorithms are at the core of training AI models, and they define how data is transformed into useful insights or decisions. Common generative AI algorithms include generative adversarial networks (GANs), variational autoencoders (VAEs), and transformers. Each algorithm is designed for a specific field (such as painting, language recognition, translation, video generation) or purpose, and then a dedicated AI model is trained through the algorithm.

So with so many algorithms and models, each with its own strengths, can they be integrated into one model that does it all? Bittensor, which has been gaining popularity recently, is the leader in this direction. Through mining incentives, it lets different AI models and algorithms collaborate and learn from each other, with the aim of creating a more efficient and versatile AI model. Other projects in this direction include Commune AI (code collaboration). However, algorithms and models are the crown jewels of today's AI companies and will not be lent out casually.

The narrative of a collaborative AI ecosystem is therefore novel and interesting. Such an ecosystem uses the strengths of blockchain to overcome the drawback of siloed AI algorithms, but whether it can create corresponding value is still unknown. After all, the closed-source algorithms and models of leading AI companies are updated, iterated, and integrated very quickly. OpenAI, for example, has gone from early text generation models to multi-domain generation models in less than two years. Projects such as Bittensor may have to find a different approach in the fields that their models and algorithms target.

Decentralized Big Data

At the simple end, using private data to feed AI and labeling data are both directions that fit blockchain well. The main thing to watch is how to prevent junk data and malicious behavior, and data storage can also benefit DePIN projects such as Filecoin (FIL) and Arweave (AR). At the more complex end, applying machine learning (ML) to blockchain data to solve the problem of blockchain data accessibility is also an interesting direction (it is one of the directions Giza is exploring).

In theory, blockchain data is always accessible and reflects the state of the entire blockchain. But for those outside the blockchain ecosystem, accessing this huge amount of data is not easy. Storing a complete blockchain requires extensive expertise and a large amount of specialized hardware. To overcome these challenges, several solutions have emerged in the industry. RPC providers expose node access through APIs, while indexing services make data extraction possible through SQL and GraphQL, and both play a key role in solving the problem. However, these approaches have limitations. RPC services are not suited to high-density use cases that require large volumes of data queries and often fail to meet demand. Indexing services provide a more structured way to retrieve data, but the complexity of Web3 protocols makes efficient queries extremely difficult to construct, sometimes requiring hundreds or even thousands of lines of complex code. This complexity is a huge obstacle for general data practitioners and for anyone not deeply familiar with the details of Web3. Taken together, these limitations highlight the need for a more accessible way to obtain and use blockchain data, which would promote wider application and innovation in the field.
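To illustrate why per-request RPC access scales poorly for bulk data work, the sketch below builds JSON-RPC 2.0 payloads for the standard Ethereum method `eth_getBlockByNumber`, one per block. It only constructs the requests and sends nothing over the network. The helper name and the starting block number are hypothetical, and no particular provider or endpoint is assumed.

```python
import json


def block_requests(start, count):
    """Build one JSON-RPC 2.0 request per block for eth_getBlockByNumber."""
    return [
        {
            "jsonrpc": "2.0",
            "id": i,
            "method": "eth_getBlockByNumber",
            # params: block number as a hex string, True to include full transactions
            "params": [hex(start + i), True],
        }
        for i in range(count)
    ]


batch = block_requests(start=19_000_000, count=3)
print(json.dumps(batch[0]))

# A modest historical backfill already implies a very large number of calls.
print(len(block_requests(start=0, count=10_000)))
```

Each block is its own round trip, and the response is raw node data that still has to be decoded and joined before it is useful for analysis. That is the gap indexing services, and potentially ML-ready data sets, try to fill.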

By combining ZKML (zero-knowledge machine learning, which reduces the burden of running machine learning on-chain) with high-quality blockchain data, it may then be possible to create data sets that solve the blockchain accessibility problem. AI can significantly lower the barrier to accessing blockchain data. Over time, developers, researchers, and ML enthusiasts will be able to access more high-quality, relevant data sets and use them to build effective and innovative solutions.

AI empowers Dapp

Since ChatGPT became popular in 2023, AI-empowered Dapps have become a very common direction. Highly versatile generative AI can be accessed through APIs to simplify and add intelligence to data analysis platforms, trading bots, blockchain encyclopedias, and other applications. It can also act as a chatbot (such as Myshell) or an AI companion (Sleepless AI), and can even be used to create NPCs in blockchain games. However, because the technical barrier is low, most of these projects simply call an API and apply some fine-tuning, and the integration with the project itself is far from seamless, so this direction is rarely discussed.

But after Sora's arrival, I personally think AI-empowered GameFi (including the metaverse) and creative platforms will become the focus of attention. Because the Web3 field is bottom-up by nature, it has been difficult to produce products that can compete with traditional game or creative companies, and the emergence of Sora is likely to break this deadlock (perhaps in just two to three years). Judging from Sora's demo, it already has the potential to compete with companies producing short-form dramas, and Web3's active community culture can give rise to a large number of interesting ideas. When the only constraint is imagination, the barriers between the bottom-up industry and the top-down traditional industry will be broken.

Conclusion

With the continuous advancement of generative AI tools, we will experience more epoch-making "iPhone moments" in the future. Although many people scoff at the combination of AI and Web3, I actually think most of the current directions are sound. There are really only three pain points to solve: necessity, efficiency, and fit. Although the integration of the two is still at an exploratory stage, that does not prevent this track from becoming a mainstream theme of the next bull market.

We should always maintain enough curiosity and openness toward new things. Historically, the transition from horse-drawn carriages to cars became a foregone conclusion almost overnight. As with inscriptions and NFTs in the past, holding on to too many prejudices will only lead to missed opportunities.