Original title: "Protocol and staking pool changes that could improve decentralization and reduce consensus overhead"

By: Vitalik Buterin

Compiled by: bayemon.eth, ChainCatcher

Special thanks to Mike Neuder, Justin Drake and others for their feedback and reviews. See also: Mike Neuder, Dankrad Feist, and arixon.eth’s earlier posts on similar topics.

Ethereum today has a large amount of two-tiered staking. Two-tiered staking here means a staking model with two classes of participants:

Node operators: run a node and put up their reputation, or a certain amount of their own capital, as collateral.

Delegators: put up some amount of ETH, with no minimum and no requirement to participate in any way beyond providing capital.

This emerging two-tiered staking arises from the many staking pools that offer liquid staking tokens (LSTs). Rocket Pool and Lido both follow this model.

However, the current two-tiered staking has two flaws:

Centralization risk among node operators: the mechanism for selecting node operators in all current staking pools is still overly centralized.

Unnecessary consensus burden: Ethereum L1 has to verify about 800,000 signatures per epoch, which would be a huge load if it had to be handled in a single slot. In addition, because much of the staked capital sits in liquid staking pools, the network itself does not fully benefit from this load. If Ethereum can achieve reasonable decentralization and security without requiring every staker to sign in every slot, the community could adopt such a design and substantially reduce the number of signatures per slot.

This article describes possible solutions to both problems. It starts from an assumption: most capital is held by people who are unwilling to personally run staking nodes in their current form, which means signing messages in every slot, locking up a deposit, and being exposed to slashing. Given that, what role can these people still play that contributes meaningfully to the decentralization and security of the network?

How does two-tiered staking work today?

The two most popular staking pools are Lido and Rocket Pool. In the case of Lido, the two parties involved are:

Node operators: elected by the Lido DAO, which in practice means elected by LDO holders.

Delegators: when someone deposits ETH into the Lido smart contract system, stETH is created, and node operators can stake with the deposited ETH (but because the withdrawal credentials are bound to a smart contract address, the operator cannot withdraw it at will).

For Rocket Pool, they are:

Node Operator: Anyone can become a node operator by submitting 8 ETH and a certain number of RPL tokens.

Delegators: when someone deposits ETH into the Rocket Pool smart contract system, rETH is created, and node operators can stake with the deposited ETH (again, because the withdrawal credentials are bound to a smart contract address, the operator cannot withdraw it at will).

The role of delegators

In these systems (or in new systems enabled by potential future protocol changes), a key question to ask is: from the protocol's perspective, what is the point of having delegators?

To see why this question matters, consider the following scenario. The protocol change mentioned in the post, capping slashing penalties at 2 ETH, is adopted. Rocket Pool responds by reducing the node operator deposit to 2 ETH, and its market share rises to 100%, both among stakers and among ETH holders: as rETH becomes risk-free, almost all ETH holders become either rETH holders or node operators.

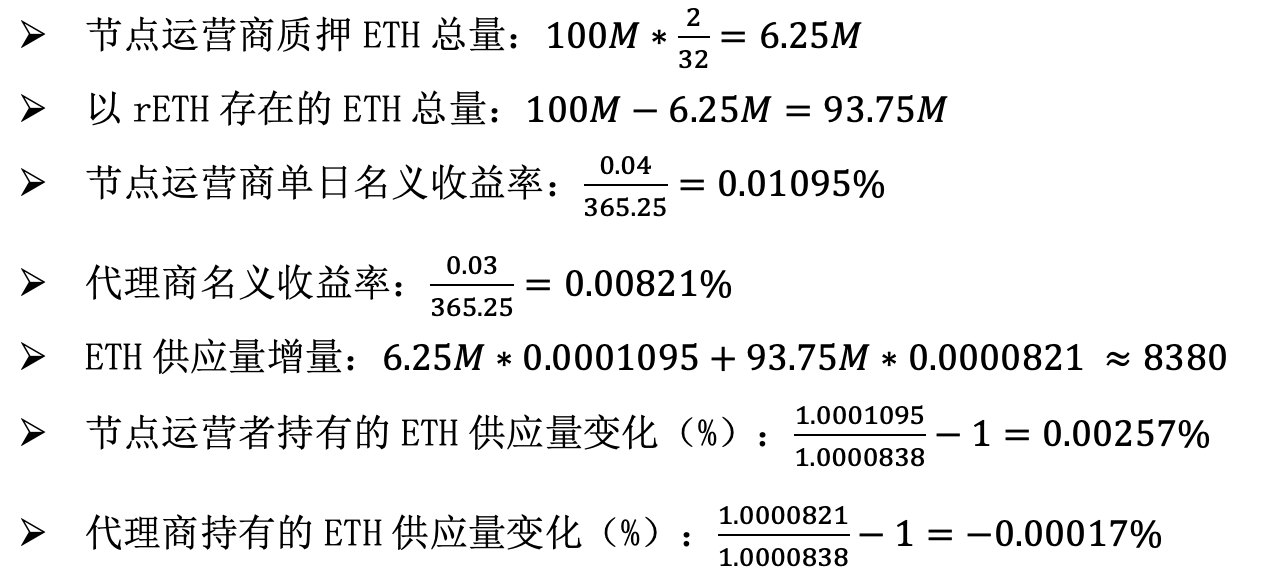

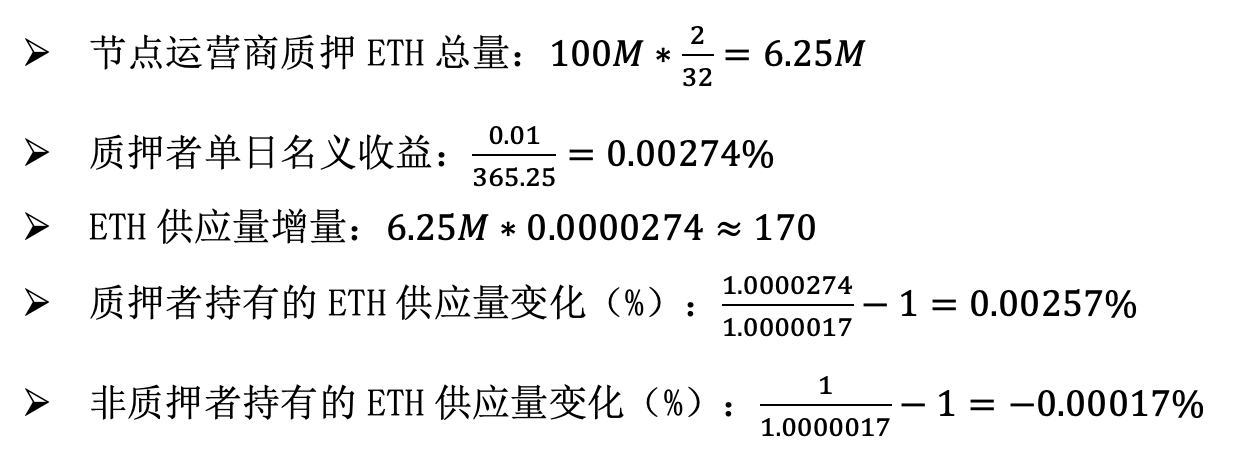

Assume a 3% return for rETH holders (including protocol rewards and priority fees + MEV) and a 4% return for node operators. We also assume that the total supply of ETH is 100 million.

The calculation results are as follows. In order to avoid compound interest calculation, we will calculate the income on a daily basis:

Now suppose instead that Rocket Pool does not exist, the minimum deposit for each staker is reduced to 2 ETH, total staked ETH is capped at 6.25 million, and the node operator return is reduced to 1%. Let's calculate again:

Consider these two scenarios from the perspective of the cost of an attack. In the first scenario, an attacker would not sign up as a delegator, since delegators inherently have no power and there would be no point. Instead, the attacker would stake all of their ETH as a node operator. To reach 1/3 of the total stake, they would need to put up 2.08 million ETH (which, to be fair, is still a considerable amount). In the second scenario, the attacker simply stakes, and to reach 1/3 of the total stake they still need 2.08 million ETH.

From the perspective of staking economics and attack cost, the end result of the two scenarios is exactly the same. The share of the total ETH supply held by node operators increases by 0.00256% per day, and the share held by non-node-operators decreases by 0.00017% per day. The attack cost is 2.08 million ETH. In this model, therefore, delegation looks like a pointless Rube Goldberg machine, and a rational community might even prefer to cut out the middleman, sharply reduce staking rewards, and cap total staked ETH at 6.25 million.
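The daily figures quoted above can be reproduced with a few lines of arithmetic. The sketch below is illustrative only. The helper name `daily_share_change` is hypothetical, and the code assumes simple daily returns of APR/365, the 100 million ETH supply stated earlier, and a split of 6.25 million ETH held by node operators versus 93.75 million ETH held by everyone else in both scenarios (2 ETH of operator capital per 32 ETH validator in the first scenario, the 6.25 million ETH cap in the second).

```python
DAYS_PER_YEAR = 365

def daily_share_change(operator_eth, other_eth, operator_apr, other_apr):
    """Return the relative one-day change in each group's share of total supply."""
    total_before = operator_eth + other_eth
    operator_after = operator_eth * (1 + operator_apr / DAYS_PER_YEAR)
    other_after = other_eth * (1 + other_apr / DAYS_PER_YEAR)
    total_after = operator_after + other_after

    operator_change = (operator_after / total_after) / (operator_eth / total_before) - 1
    other_change = (other_after / total_after) / (other_eth / total_before) - 1
    return operator_change, other_change

OPERATOR_ETH = 6_250_000   # assumed node operator holdings in both scenarios
OTHER_ETH = 93_750_000     # rETH holders (scenario 1) or non-stakers (scenario 2)

# Scenario 1: operators earn 4%, rETH holders earn 3%.
with_pool = daily_share_change(OPERATOR_ETH, OTHER_ETH, 0.04, 0.03)
# Scenario 2: operators earn 1%, everyone else earns nothing.
without_pool = daily_share_change(OPERATOR_ETH, OTHER_ETH, 0.01, 0.00)

# An attacker needs 1/3 of the operator-held stake in either scenario.
attack_cost = OPERATOR_ETH / 3

for label, (op, other) in [("with Rocket Pool", with_pool), ("without Rocket Pool", without_pool)]:
    print(f"{label}: operators {op:+.5%} per day, others {other:+.5%} per day")
print(f"ETH needed for 1/3 of operator stake: {attack_cost:,.0f}")
```

Under these assumptions the two scenarios agree with each other, and with the figures in the text, up to rounding in the last digit.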

Of course, this article is not advocating a 4x cut in staking rewards combined with a 6.25 million ETH cap on total stake. The point is rather that a well-functioning staking system should have one key property: delegators should carry a responsibility that actually matters to the system. It is also fine if delegators are motivated to act correctly largely by community pressure and altruism; after all, those are the main forces that lead people to stake in decentralized, high-security ways today.

Delegators' responsibilities

If delegators could play a meaningful role in a staking system, what might that role be?

I think there are two types of answers:

Delegate selection: delegators can choose which node operators to delegate their stake to. A node operator's "weight" in the consensus mechanism is proportional to the total stake delegated to them. Delegate selection exists today only in a limited form: rETH or stETH holders can withdraw their ETH and switch to a different pool. It could be made far more usable.

Consensus participation: delegators can choose to take on a role in the consensus mechanism that carries "lighter" responsibilities than full staking, with no long exit period and no slashing risk, but that still acts as a check on node operators.
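To make the first answer concrete, here is a minimal sketch of consensus weight that is proportional to delegated stake. The function name, the tuple layout, and the sample delegators and operators are all hypothetical; nothing here reflects an existing pool's contract interface.

```python
from collections import defaultdict

def operator_weights(delegations):
    """Map each operator to its fraction of the total delegated stake.

    `delegations` is an iterable of (delegator, operator, eth_amount) tuples.
    """
    stake = defaultdict(float)
    for _delegator, operator, amount in delegations:
        stake[operator] += amount
    total = sum(stake.values())
    if total == 0:
        return {}
    return {operator: amount / total for operator, amount in stake.items()}

delegations = [
    ("alice", "operator_a", 10.0),
    ("bob", "operator_a", 30.0),
    ("carol", "operator_b", 60.0),
]
weights = operator_weights(delegations)

# Delegate selection in action: bob moves his stake to operator_b.
redelegated = [
    ("bob", "operator_b", amount) if delegator == "bob" else (delegator, operator, amount)
    for delegator, operator, amount in delegations
]
weights_after = operator_weights(redelegated)
print(weights, weights_after)
```

The point of the example is that a delegator's choice directly shifts operator weight, which is what would give delegate selection real influence.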

Enhancing delegate selection

There are three ways to strengthen delegate selection:

Better voting tools within pools

More competition between pools

Enshrined delegation

Voting within pools is not really practical today: in Rocket Pool, anyone can become a node operator, and in Lido, votes are cast by LDO holders, not ETH holders. Lido has a proposal for LDO + stETH dual governance, under which stETH holders could activate a protection mechanism that blocks new votes and so prevents node operators from being added or removed, which gives stETH holders some say. Still, this power is limited and could be stronger.

Competition between pools already exists today, but it is relatively weak. The main challenge is that the staking tokens of smaller pools are less liquid, harder to trust, and less widely supported by applications.

We can improve the first two issues by capping slashing penalties at a smaller amount, such as 2 or 4 ETH. The remaining ETH, which cannot be slashed, could then be safely deposited and withdrawn immediately, so that two-way redemption remains workable even for smaller staking pools. We can improve the third issue by creating a general issuance contract for managing LSTs (similar to the contracts that ERC-4337 and ERC-6900 use for wallets), so that any staking token issued through this contract can be guaranteed to be safe.

There is currently no enshrined delegation in the protocol, but it seems plausible in the future. It would involve logic similar to the ideas above, implemented at the protocol level. For the pros and cons of enshrining things, see this post.

These ideas improve on the status quo, but the benefits they can offer are limited. Token voting governance is problematic, and ultimately any form of non-incentivized delegate selection is just a form of token voting; this has always been my main gripe with Delegated Proof of Stake. It is therefore also valuable to consider stronger forms of consensus participation.

Consensus participation

Even setting aside the current problems with liquid staking, the current approach to solo staking has limits. Assuming single-slot finality, each slot could, optimistically, process about 100,000 to 1,000,000 BLS signatures. Even if recursive SNARKs are used to aggregate signatures, each signature still needs an accompanying bitfield of participants so that signers can be held accountable. If Ethereum becomes a global-scale network, even storing the bitfields with full danksharding will not be enough: 16 MB per slot can support only about 64 million stakers.
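A rough sense of scale for these bitfields can be had from simple arithmetic. The sketch below is only illustrative: `bitfield_bytes` is a hypothetical helper, one bit per staker is an assumed default, and 1 MB is taken as 10^6 bytes. The text does not say how the 64 million figure is derived; the last lines merely show what per-staker budget 16 MB over 64 million stakers works out to under that definition of a megabyte.

```python
def bitfield_bytes(num_stakers, bits_per_staker=1):
    """Bytes needed to record participation for `num_stakers` in one slot."""
    total_bits = num_stakers * bits_per_staker
    return (total_bits + 7) // 8  # round up to whole bytes

for n in (100_000, 1_000_000, 64_000_000):
    size_mb = bitfield_bytes(n) / 1_000_000
    print(f"{n:>11,} stakers -> {size_mb:.4f} MB per slot at 1 bit each")

# Per-staker budget implied by "16 MB supports about 64 million stakers".
budget_bits = 16 * 1_000_000 * 8
bits_per_staker_implied = budget_bits / 64_000_000
print(f"implied budget: {bits_per_staker_implied} bits per staker")
```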

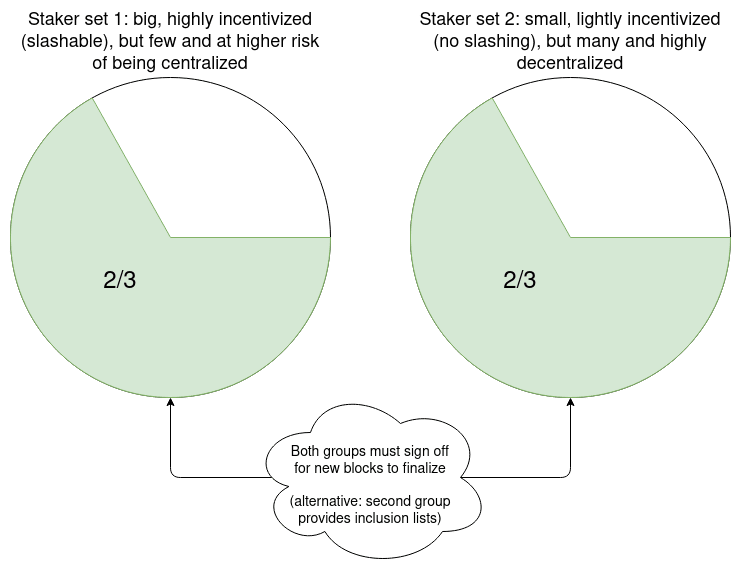

From this perspective, there is value in splitting staking into a higher-complexity, slashable tier, which is active in every slot but might have only about 10,000 participants, and a lower-complexity tier that is called on to participate only occasionally. The lower-complexity tier could be exempt from slashing entirely, or its participants could be randomly given the opportunity to make a deposit for a few slots and be subject to slashing during that time.

In practice, this could be implemented by raising the validator balance cap and later introducing a balance threshold (e.g., 2048 ETH) that determines which existing validators enter the higher-complexity or lower-complexity tier.
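As a minimal sketch of the threshold idea, the snippet below partitions validators by balance. The `Validator` type and `split_into_tiers` helper are hypothetical, and treating a balance exactly equal to the threshold as higher-complexity is an assumption; the text gives 2048 ETH only as an example value.

```python
from dataclasses import dataclass

TIER_THRESHOLD_ETH = 2048  # example threshold taken from the text

@dataclass(frozen=True)
class Validator:
    index: int
    balance_eth: float

def split_into_tiers(validators, threshold=TIER_THRESHOLD_ETH):
    """Partition validators into (higher_complexity, lower_complexity) lists."""
    higher = [v for v in validators if v.balance_eth >= threshold]  # assumed inclusive
    lower = [v for v in validators if v.balance_eth < threshold]
    return higher, lower

validators = [Validator(0, 32), Validator(1, 2048), Validator(2, 4096)]
higher, lower = split_into_tiers(validators)
print([v.index for v in higher], [v.index for v in lower])
```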

Here are some suggestions for how these small-staker roles might work:

In each slot, 10,000 small stakers are randomly selected, and each can sign what they believe to be the head of the chain for that slot. The LMD GHOST fork choice rule is run with the small stakers' messages as input. If the fork choice driven by small stakers and the fork choice driven by node operators diverge beyond a certain degree, the user's client does not accept any block as finalized and displays an error. This forces the community to step in and resolve the situation.

A delegator can send a transaction announcing to the network that they are online and willing to act as a small staker for the next hour. For a message (a block or an attestation) sent by a node to count, it must be signed both by the node and by a randomly selected delegator.

A delegator can send a transaction announcing to the network that they are online and willing to act as a small staker for the next hour. Each epoch, 10 random delegators are selected as inclusion list providers, and 10,000 more are selected as voters. They are selected k slots in advance and given a k-slot window to publish a message on-chain confirming that they are online. Each confirmed inclusion list provider can publish an inclusion list, and a block is considered invalid unless, for each inclusion list, it either includes the transactions in that list or includes votes from the selected voters indicating that the list is unavailable.
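The third suggestion can be sketched as two small functions: one that picks providers and voters from the delegators who announced themselves, and one that applies the block validity rule. This is a simplified illustration rather than a protocol specification. The names `select_roles` and `block_is_valid` are hypothetical, Python's seeded `random.Random` stands in for whatever randomness the protocol would use, and `vote_threshold` is an assumed parameter, since the text does not state how many unavailability votes are required.

```python
import random

def select_roles(online_delegators, seed, n_providers=10, n_voters=10_000):
    """Pick disjoint sets of inclusion list providers and voters."""
    rng = random.Random(seed)          # stand-in for protocol randomness
    pool = sorted(online_delegators)   # fixed order so the seed alone decides
    rng.shuffle(pool)
    providers = pool[:n_providers]
    voters = pool[n_providers:n_providers + n_voters]
    return providers, voters

def block_is_valid(block_txs, inclusion_lists, unavailability_votes, vote_threshold):
    """Apply the inclusion list rule to one block.

    block_txs: set of transaction ids included in the block
    inclusion_lists: dict of provider id -> set of transaction ids
    unavailability_votes: dict of provider id -> set of voter ids
    vote_threshold: votes needed to treat a list as unavailable (assumed)
    """
    for provider, txs in inclusion_lists.items():
        all_included = txs <= block_txs
        votes = unavailability_votes.get(provider, set())
        flagged_unavailable = len(votes) >= vote_threshold
        if not (all_included or flagged_unavailable):
            return False
    return True

online = [f"delegator_{i}" for i in range(50)]
providers, voters = select_roles(online, seed=7, n_providers=10, n_voters=20)

lists = {providers[0]: {"tx1", "tx2"}}
print(block_is_valid({"tx1", "tx2", "tx3"}, lists, {}, vote_threshold=2))
print(block_is_valid({"tx3"}, lists, {providers[0]: {voters[0]}}, vote_threshold=2))
```

A block that omits a listed transaction passes only when enough selected voters have flagged that list as unavailable, which is what constrains a censoring majority of node operators.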

What these small-staker roles have in common is that they do not require active participation in every slot, and that they are light enough for even a light node to do all the work. A deployment therefore only needs to verify the consensus layer, and a participant could run it through an app or a browser extension that is mostly passive and demands little in computing overhead, hardware, or technical know-how. It does not even require advanced technology such as a ZK-EVM.

These small-staker roles also share a common goal: preventing a 51% majority of node operators from censoring transactions. The first and second also prevent the majority from reverting finality. The third addresses censorship more directly, but it is more exposed to the choices of the majority of node operators.

These ideas are written from the perspective of a two-tiered staking solution implemented in the protocol, but they could also be implemented as a feature of staking pools. Here are some specific implementation ideas:

From the protocol's perspective, each validator could set two staking keys: a persistent staking key P, and a quick staking key Q that is output by calling a bound Ethereum address. Nodes track the fork choice of messages signed by P and of messages signed by Q separately. If the two fork choices disagree, the finalization of any block is not accepted. The staking pool is responsible for randomly selecting delegators.

The protocol could remain largely unchanged, with the validator's public key for that period set to P + Q. Note that for slashing, two slashable messages may carry different Q keys but the same P key; the slashing design needs to handle this case.

The Q key could be used in the protocol only to sign and verify inclusion lists in blocks. In this case, Q could be a smart contract rather than a single key, so staking pools could use it to implement more complex voting logic, accepting either an inclusion list from a randomly selected provider or enough votes to indicate that the inclusion list is unavailable.
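The first implementation idea, tracking P-signed and Q-signed messages separately and refusing finality when they disagree, can be sketched as follows. This is a deliberately simplified illustration: a plain plurality count over head votes is used as a stand-in for LMD GHOST, signature verification is omitted, and the names `leading_head` and `accept_finality` are hypothetical.

```python
from collections import Counter

def leading_head(votes):
    """Return the block root with the most votes, or None if there are no votes.

    `votes` maps a signer id to the block root it voted for. This plurality
    count is a simplified stand-in for the LMD GHOST fork choice rule.
    """
    if not votes:
        return None
    counts = Counter(votes.values())
    # Sorting first makes tie-breaking deterministic.
    return max(sorted(counts), key=counts.get)

def accept_finality(p_votes, q_votes):
    """Accept finality only when the P-driven and Q-driven heads agree."""
    p_head = leading_head(p_votes)
    q_head = leading_head(q_votes)
    return p_head is not None and p_head == q_head

p_votes = {"operator_1": "root_a", "operator_2": "root_a", "operator_3": "root_b"}
q_agree = {"delegator_1": "root_a", "delegator_2": "root_a"}
q_disagree = {"delegator_1": "root_b", "delegator_2": "root_b"}

print(accept_finality(p_votes, q_agree))     # heads match
print(accept_finality(p_votes, q_disagree))  # heads diverge, client would show an error
```

A real client would compare the two fork choices with some tolerance rather than requiring exact agreement, but the sketch shows where the delegators' check sits.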

Conclusion

If implemented correctly, this tweak to the proof-of-stake design can solve two problems in one fell swoop:

Give people who today lack the resources or ability to solo stake an opportunity to participate in staking in a way that keeps more power in their hands: both (i) the power to choose which node operators to support and (ii) the power to actively participate in consensus in a way that is lighter than operating a full staking node but still meaningful. Not all participants will choose one or both of these options, but any participant who does will be a significant improvement over the status quo.

Reduce the number of signatures that the Ethereum consensus layer needs to process in each slot, even with single-slot finality, to a smaller number like ~10,000. This will also help with decentralization, making it easier for everyone to run a validating node.

These solutions can be pursued at different levels of abstraction: powers granted to users within staking pools, user choice between staking pools, and enshrinement in the protocol. The choice should be made carefully, and it is usually best to pick the minimum viable enshrinement, one that minimizes protocol complexity and changes to protocol economics while still achieving the desired goals.