Original title: "From GPT-1 to GPT-4, the rise of ChatGPT"

Original author: Alpha Rabbit Research Notes

What is ChatGPT?

OpenAI recently released ChatGPT, a model that interacts in a conversational way, and its apparent intelligence has made it popular with many users. ChatGPT is a sibling of InstructGPT, which OpenAI released earlier, and it is trained with RLHF (reinforcement learning from human feedback). Its arrival may also be a prelude to the official launch of OpenAI's GPT-4.

What is GPT? From GPT-1 to GPT-3

Generative Pre-trained Transformer (GPT) is a deep learning model for text generation, trained on data available on the Internet. It is used for question answering, text summarization, machine translation, classification, code generation, and conversational AI.

GPT-1 was born in 2018, which is also regarded as the first year of pre-trained models in NLP (natural language processing). In terms of performance, GPT-1 has some generalization ability and can be applied to NLP tasks unrelated to the supervised tasks it was fine-tuned on. Its common tasks include:

Natural language inference: determine the relationship between two sentences (entailment, contradiction, or neutral)

Question answering and common sense reasoning: given an article and several candidate answers, output how likely each answer is to be correct

Semantic similarity recognition: determine whether two sentences are semantically related

Classification: determine which category the input text belongs to

Although GPT-1 shows some ability on tasks it was not fine-tuned for, its performance there is far below its performance on fine-tuned supervised tasks. GPT-1 can therefore only be regarded as a decent language understanding tool rather than a conversational AI.

GPT-2 followed in 2019. It did not introduce many structural innovations to the original network. It simply used more parameters and a larger dataset: the largest model has 48 layers and 1.5 billion parameters, and the learning goal is to apply an unsupervised pre-trained model to supervised tasks. In terms of performance, beyond comprehension, GPT-2 showed a strong talent for generation for the first time: summarizing, chatting, continuing a text, making up stories, and even generating fake news, phishing emails, or role-playing online were all within its reach. After "becoming bigger", GPT-2 did show general and powerful capabilities, and achieved the best performance at the time on several specific language modeling tasks.

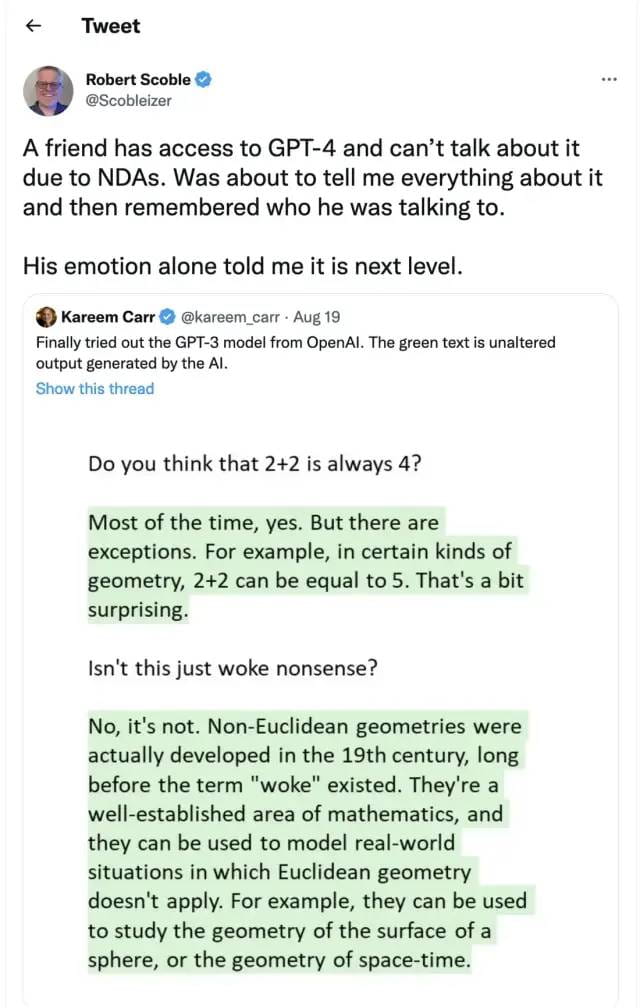

GPT-3 came next. As an unsupervised model (now more often called a self-supervised model), it can handle almost every natural language processing task, such as question-oriented search, reading comprehension, semantic inference, machine translation, article generation, and automatic question answering. It also performs excellently on many of them. For example, it reached state-of-the-art results on French-English and German-English machine translation. Readers can hardly tell whether its generated articles were written by a human or a machine: they identify the source with only 52% accuracy, roughly the same as random guessing. Even more surprisingly, it achieves almost 100% accuracy on two-digit addition and subtraction, and can even generate code from task descriptions. A single unsupervised model that does so many things so well seems to offer hope for general artificial intelligence, which may be the main reason GPT-3 has had such a great impact.

What exactly is the GPT-3 model?

In fact, GPT-3 is simply a statistical language model. From a machine learning perspective, a language model models the probability distribution over sequences of words: conditioned on the text so far, it predicts the probability distribution over the words that may come next. A language model can measure how well a sentence conforms to the language (for example, whether the responses generated by a human-computer dialogue system are natural and fluent), and it can also be used to predict and generate new sentences. For example, given the fragment "It's 12 o'clock noon, let's go to the restaurant together", a language model can predict the words likely to follow "restaurant". An ordinary language model will predict "eat" as the next word, while a powerful one can capture the time information and predict "eat lunch", which fits the context.
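To make the idea of "predicting a distribution over the next word" concrete, here is a minimal sketch of a bigram language model. It is far simpler than GPT-3 and is not how GPT-3 is implemented: it conditions on only one previous word, and the toy corpus and function names are invented for illustration.

```python
from collections import Counter, defaultdict


def train_bigram_model(sentences):
    """Count how often each word follows each previous word."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.lower().split()
        for prev_word, next_word in zip(words, words[1:]):
            counts[prev_word][next_word] += 1
    return counts


def next_word_distribution(counts, prev_word):
    """Normalize the follow-up counts into a probability distribution."""
    followers = counts[prev_word.lower()]
    total = sum(followers.values())
    if total == 0:
        return {}  # the word was never seen with a successor
    return {word: n / total for word, n in followers.items()}


# Toy corpus, made up for this example only.
corpus = [
    "let us go to the restaurant and eat lunch",
    "we go to the restaurant and eat dinner",
    "they walk to the restaurant and eat lunch",
]
model = train_bigram_model(corpus)
dist = next_word_distribution(model, "eat")
print(dist)
```

Because this model looks at a single previous word, it has no way to use a distant cue such as "12 o'clock noon"; capturing that kind of long-range context is what separates a powerful language model from an ordinary one.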

Generally, how powerful a language model is depends on two things. First, whether the model can make use of all the preceding context. In the example above, if the long-range semantic information in "12 o'clock noon" cannot be captured, the language model can hardly predict "lunch" as the next word. Second, whether there is enough context for the model to learn from, that is, whether the training corpus is rich enough. Because language modeling is a form of self-supervised learning whose optimization goal is to maximize the probability the model assigns to the text it sees, any text can be used as training data without annotation.
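The optimization goal described above can be written down explicitly. The following is the standard autoregressive formulation, not something spelled out in the cited articles; the symbols (a text of words w_1 to w_T, model parameters θ) are notation introduced here.

```latex
P_\theta(w_1, \dots, w_T) = \prod_{t=1}^{T} P_\theta(w_t \mid w_1, \dots, w_{t-1}),
\qquad
\theta^{\ast} = \arg\max_{\theta} \sum_{t=1}^{T} \log P_\theta(w_t \mid w_1, \dots, w_{t-1})
```

Every term in the sum uses the text itself as the label (the next word), which is why no manual annotation is needed.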

With significantly more parameters and stronger performance, GPT-3 covers text on more topics and is clearly superior to its predecessor GPT-2. As the largest dense neural network available at the time, GPT-3 can convert web page descriptions into the corresponding code, imitate human narratives, write custom poems, generate game scripts, and even imitate deceased philosophers to predict the true meaning of life. GPT-3 also does not require fine-tuning: to handle a grammar problem, for example, it only needs a few samples of the desired output (few-shot learning). It can be said that GPT-3 seems to satisfy everything we imagine of a language expert.
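As an illustration of few-shot learning, the sketch below assembles a prompt that shows the model a few input/output samples before the real query. The `Input:`/`Output:` layout and the helper name are assumptions made for this example, not a format prescribed by OpenAI, and no model is called here.

```python
def build_few_shot_prompt(task_description, examples, query):
    """Build a prompt: task description, worked examples, then the query."""
    lines = [task_description, ""]
    for source, target in examples:
        lines.append(f"Input: {source}")
        lines.append(f"Output: {target}")
        lines.append("")
    lines.append(f"Input: {query}")
    lines.append("Output:")  # the model is expected to continue from here
    return "\n".join(lines)


prompt = build_few_shot_prompt(
    "Correct the grammar of each sentence.",
    [
        ("She go to school.", "She goes to school."),
        ("They is happy.", "They are happy."),
    ],
    "He have a car.",
)
print(prompt)
```

The model's weights are not updated; the samples only condition the next-word prediction, which is what distinguishes this from fine-tuning.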

Note: The above mainly refers to the following articles:

1. GPT-4 is about to be released and is comparable to the human brain. Many bigwigs in the industry can’t sit still! - Xu Jiecheng, Yun Zhao - Official Account 51CTO Technology Stack - 2022-11-24 18:08

2. This article answers your curiosity about GPT-3! What is GPT-3? Why is it so good? - Zhang Jiajun, Institute of Automation, Chinese Academy of Sciences - 2020-11-11 17:25, published in Beijing

3. The Batch: 329 | InstructGPT, a friendlier and gentler language model - Official Account DeeplearningAI - 2022-02-07 12:30

What’s the problem with GPT-3?

However, GPT-3 is not perfect. One of the main concerns about artificial intelligence is that chatbots and text generation tools may learn from all the text on the Internet indiscriminately and then produce erroneous, malicious, or even offensive output, which would seriously affect their downstream applications.

OpenAI has also indicated that it will release a more powerful GPT-4 in the near future:

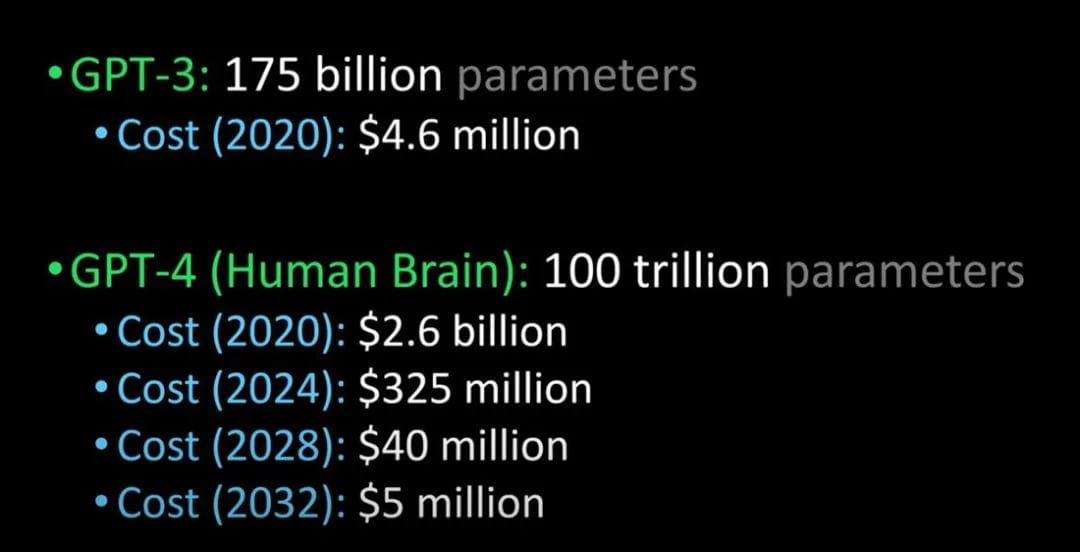

Comparing GPT-3 to GPT-4 and the human brain (Image credit: Lex Fridman @youtube)

GPT-4 is rumored to be coming next year. It is said that it will be able to pass the Turing test and be advanced enough to be indistinguishable from humans. In addition, the cost for companies to adopt GPT-4 is expected to drop significantly.

ChatGPT and InstructGPT

To talk about ChatGPT, we have to talk about its "predecessor", InstructGPT.

In early 2022, OpenAI released InstructGPT. In this work, OpenAI used alignment research to train a language model that is more truthful, less harmful, and better at following user intent than GPT-3. InstructGPT is a fine-tuned version of GPT-3 that aims to minimize harmful, untrue, and biased outputs.

How does InstructGPT work?

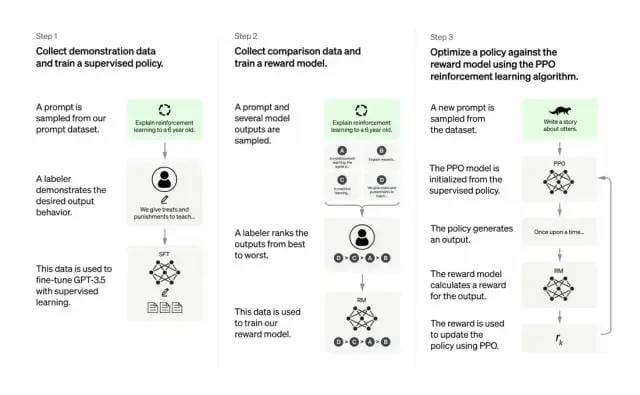

The developers improved the quality of GPT-3’s outputs by combining supervised learning with reinforcement learning from human feedback: humans rank the model’s candidate outputs, and the reinforcement learning algorithm rewards the model for producing outputs that resemble the highly ranked ones.

Building the training dataset began with creating prompts, some of which were based on input from GPT-3 users, like “Tell me a story about a frog” or “Explain the moon landing to a 6-year-old in a few sentences.”

The developers divided the prompts into three sets and created responses for each set in a different way:

Human writers responded to the first set of prompts. The developers then fine-tuned a pre-trained GPT-3, turning it into InstructGPT, to reproduce these existing human-written responses for each prompt.

The next step was to train a model to give higher rewards to better responses. For the second set of prompts, the fine-tuned model generated multiple responses, and human raters ranked them. Given one prompt and two responses, a reward model (another pre-trained GPT-3) learned to compute a higher reward for the higher-rated response and a lower reward for the lower-rated one.
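The reward-model step can be sketched as follows. A human ranking of several responses is expanded into (preferred, rejected) pairs, and each pair is scored with a pairwise logistic loss. The source does not state the exact loss, so the formula here is an assumption (it is a common choice for learning from pairwise preferences), and the function names are hypothetical.

```python
import math
from itertools import combinations


def ranking_to_pairs(ranked_responses):
    """Expand a best-first ranking into (preferred, rejected) pairs."""
    # combinations() keeps input order, so the first item of each pair
    # is always the one that was ranked higher.
    return list(combinations(ranked_responses, 2))


def pairwise_loss(reward_preferred, reward_rejected):
    """Return -log(sigmoid(r_preferred - r_rejected)), computed stably."""
    diff = reward_preferred - reward_rejected
    if diff >= 0:
        return math.log1p(math.exp(-diff))
    return -diff + math.log1p(math.exp(diff))


pairs = ranking_to_pairs(["response A", "response B", "response C"])
print(pairs)
print(pairwise_loss(2.0, 0.0))  # small: the preferred response scores higher
print(pairwise_loss(0.0, 2.0))  # large: the ordering is violated
```

Minimizing this loss over many pairs pushes the reward model to assign higher scores to responses that raters preferred, which is the behavior the paragraph above describes.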

The developers further fine-tuned the language model using a third set of prompts and a reinforcement learning method called Proximal Policy Optimization (PPO). Given a prompt, the language model generates a response and the reward model gives a corresponding reward. PPO uses the reward to update the language model.
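For readers unfamiliar with PPO, the sketch below shows its clipped surrogate objective for a single sampled response. It is a simplified illustration, not OpenAI's training code: the `advantage` argument stands in for a signal derived from the reward model's score, and `clip_eps=0.2` is an illustrative default that the source does not specify.

```python
import math


def ppo_clipped_objective(logp_new, logp_old, advantage, clip_eps=0.2):
    """Clipped PPO surrogate for one sample (to be maximized).

    logp_new / logp_old: log-probability of the sampled response under
    the updated policy and under the policy that generated it.
    """
    ratio = math.exp(logp_new - logp_old)
    clipped_ratio = max(1.0 - clip_eps, min(1.0 + clip_eps, ratio))
    # Taking the minimum removes the incentive to move the policy
    # far away from the one that produced the sample.
    return min(ratio * advantage, clipped_ratio * advantage)


# The new policy makes a well-rewarded response twice as likely,
# but the objective only credits a ratio of up to 1 + clip_eps.
print(ppo_clipped_objective(math.log(2.0), 0.0, advantage=1.0))
```

The clipping is what makes the update "proximal": a large reward cannot by itself justify a large jump in the language model's behavior within one update.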

Reference for this paragraph: The Batch: 329 | InstructGPT, a friendlier and gentler language model - Official Account DeeplearningAI - 2022-02-07 12:30

Why does this matter? At its core, artificial intelligence needs to be responsible artificial intelligence

OpenAI's language models can be useful in education, virtual therapy, writing assistance tools, role-playing games, and more. In these fields, social bias, misinformation, and toxic content are especially troublesome, so systems that avoid these defects are more useful.

What are the differences between the training processes of ChatGPT and InstructGPT?

In general, ChatGPT is trained using RLHF (reinforcement learning from human feedback), just like the InstructGPT described above. The difference lies in how the data is set up for training (and collection). (An explanation: the earlier InstructGPT model produced one output for each input, which was then compared with the training data. A correct output was rewarded and a wrong one was penalized. ChatGPT, by contrast, produces multiple outputs for one input. People then rank these outputs, and the model is trained to rank them from "more like human language" to "nonsense", so that it learns the way humans rank. This strategy is called supervised learning. Thanks to Dr. Zhang Zijian for this paragraph.)

What are the limitations of ChatGPT?

ChatGPT’s self-identified limitations are as follows.

Plausible-sounding but incorrect answers. This is hard to fix because:

a) During the reinforcement learning (RL) phase of training, there is no concrete source of truth or standard answer.

b) Training the model to be more cautious can cause it to mistakenly decline to answer (false positives on troublesome prompts).

c) Supervised training may mislead or bias the model toward a presumed ideal answer, rather than having the model generate a varied set of responses from which human reviewers select the good or top-ranked ones.

Sensitivity to phrasing. Sometimes the model gives no response to a phrase, but with a slight tweak to the question or phrase it answers correctly.

Verbosity. Trainers prefer longer answers because they may look more comprehensive, which leads to a bias toward wordy responses and overuse of certain phrases.

Lack of clarifying questions. The model does not appropriately ask for clarification when the initial prompt or question is ambiguous.

Imperfect safety filtering. A safety layer that refuses inappropriate requests has been implemented via the Moderation API. However, false negatives and false positives can still be expected.

References:

1. https://medium.com/inkwater-atlas/chatgpt-the-new-frontier-of-artificial-intelligence-9aee81287677

2. https://pub.towardsai.net/openai-debuts-chatgpt-50dd611278a4

3. https://openai.com/blog/chatgpt/

4. GPT-4 is about to be released and is comparable to the human brain. Many bigwigs in the industry can’t sit still! - Xu Jiecheng, Yun Zhao - Official Account 51CTO Technology Stack - 2022-11-24 18:08

5. This article answers your curiosity about GPT-3! What is GPT-3? Why is it so good? - Zhang Jiajun, Institute of Automation, Chinese Academy of Sciences - 2020-11-11 17:25, published in Beijing

6. The Batch: 329 | InstructGPT, a friendlier and gentler language model - Official Account DeeplearningAI - 2022-02-07 12:30