There’s a point in most Web3 games where the illusion breaks. It doesn’t happen immediately. At first, everything feels engaging, even rewarding. But after a while, the pattern shows up. Actions stop feeling like choices and start feeling like calculations. You’re not really playing anymore. You’re extracting.

I expected the same thing going into Pixels. At first, I thought it was just another farming loop with better design and smoother onboarding. Do tasks, earn rewards, optimize routes, repeat. It looked familiar enough to assume the same outcome. Another system where efficiency quietly replaces enjoyment.

But something didn’t fully line up. Players weren’t collapsing into a single optimal strategy. Some inefficiencies stayed. Some actions didn’t maximize output. And yet, they persisted. That usually doesn’t happen in a pure reward loop. It suggests the system isn’t just paying for activity. It’s selecting for something deeper.

Most GameFi economies fail at the incentive layer. Not because gameplay is weak, but because the system rewards the wrong behavior. Fixed, predictable rewards turn everything into a calculation. And once that happens, the dominant strategy becomes extraction. Bots and optimized players don’t just exploit the system; they become it.

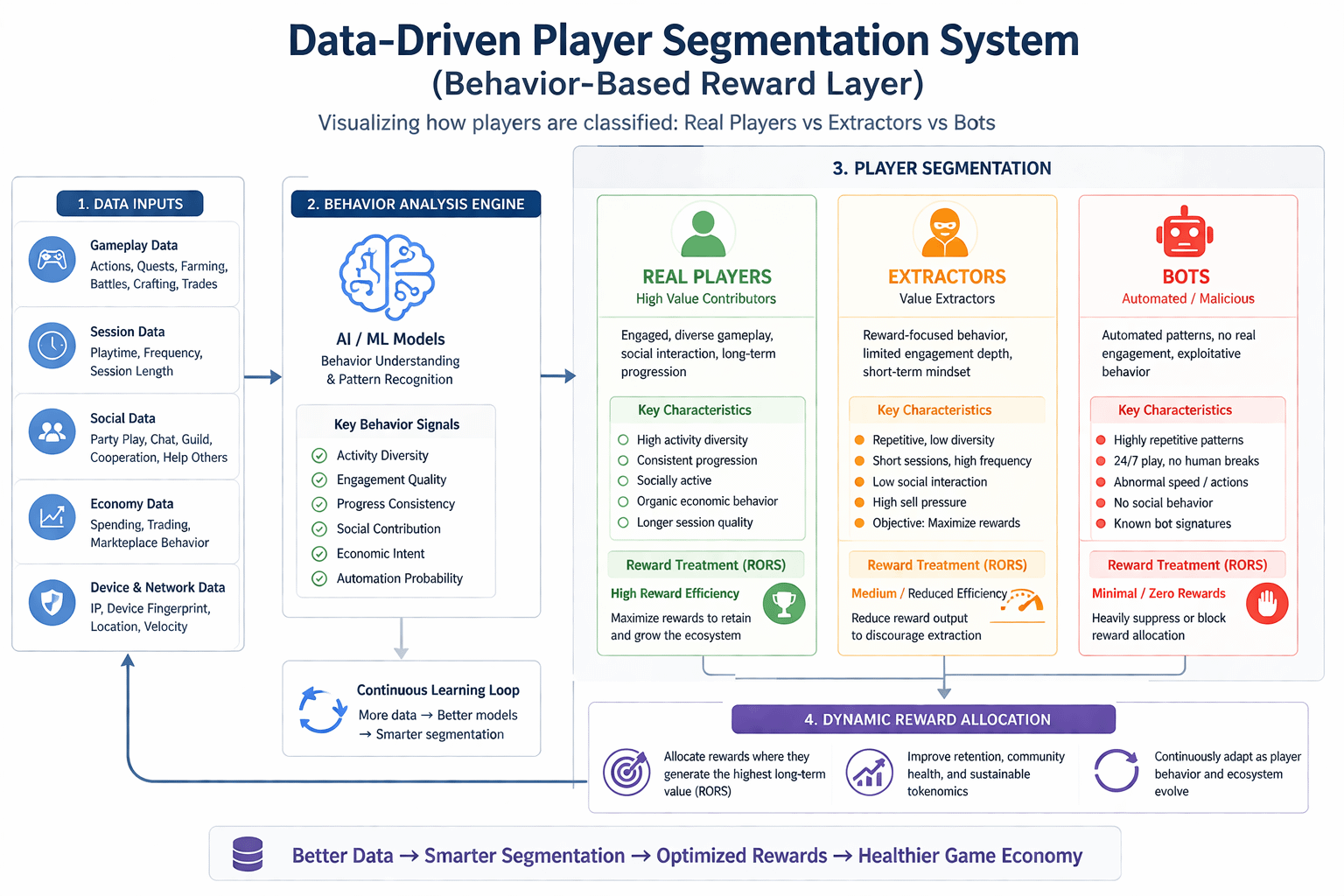

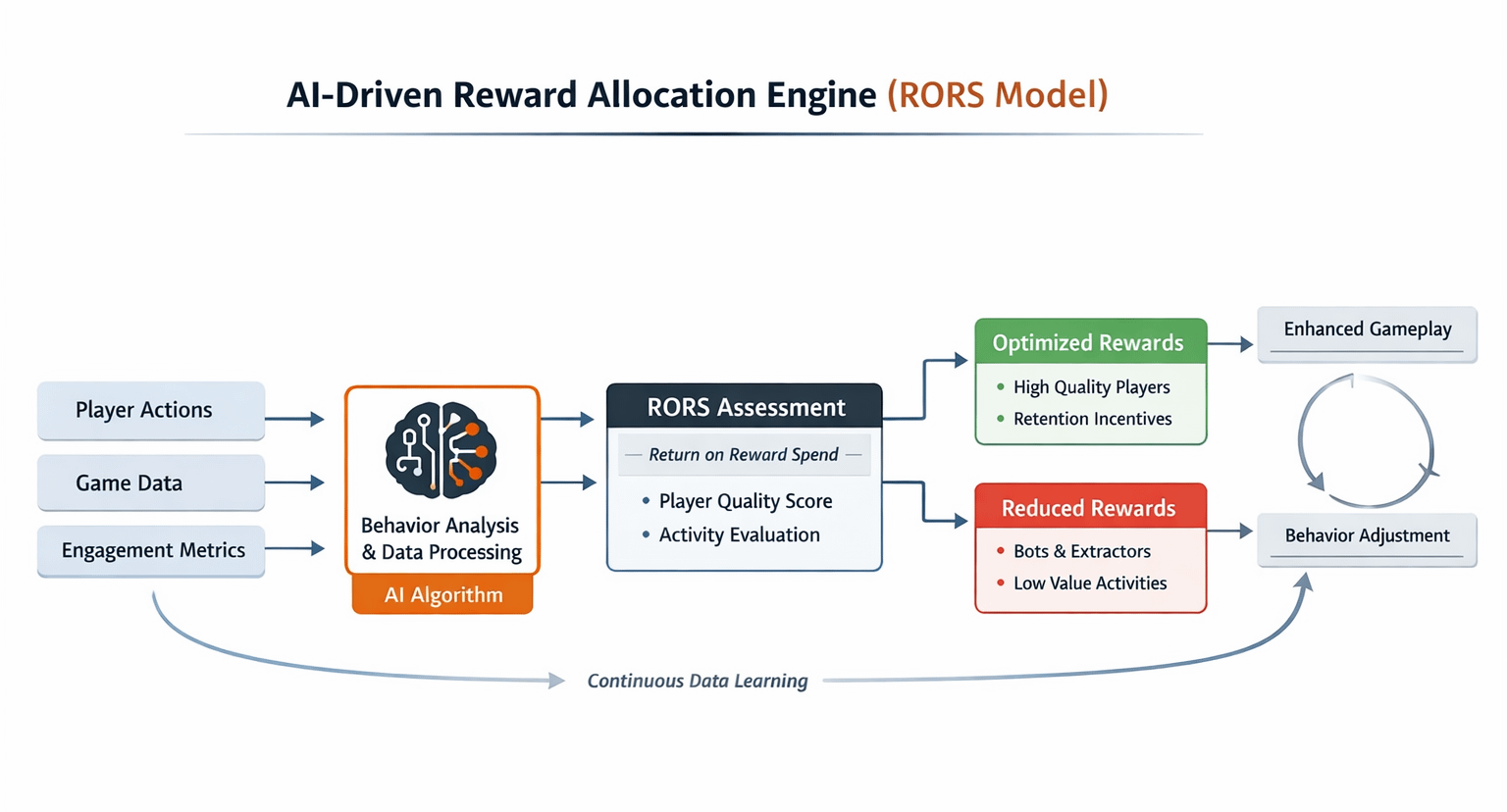

Pixels approaches this differently. It treats rewards less like emissions and more like capital that needs to be allocated with intent. The team calls this RORS, Return on Reward Spend. Not how much you give out, but how effectively those rewards translate into meaningful engagement. It’s a shift from distribution to efficiency.

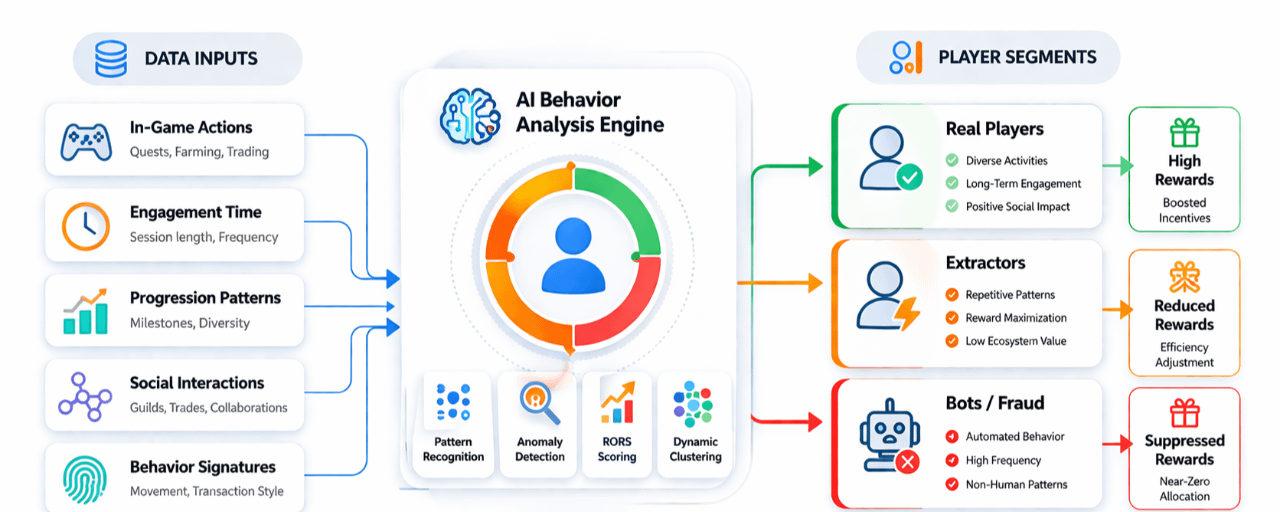
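To make the RORS idea concrete, here is a minimal sketch that treats it as a simple ratio: value returned to the ecosystem divided by the value of rewards paid out. This is an assumption for illustration, not Pixels’ published formula; `RewardCampaign`, `reward_spend`, `value_returned`, and the campaign numbers are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class RewardCampaign:
    """One hypothetical reward program, e.g. a quest line or a seasonal event."""
    name: str
    reward_spend: float    # assumed: value of tokens paid out (e.g. in USD)
    value_returned: float  # assumed: value attributed back (e.g. in-game spend)


def rors(c: RewardCampaign) -> float:
    """Return on Reward Spend (illustrative): value returned per unit of reward spent."""
    if c.reward_spend <= 0:
        raise ValueError("reward_spend must be positive")
    return c.value_returned / c.reward_spend


# Hypothetical campaigns with made-up numbers.
campaigns = [
    RewardCampaign("daily_farming_quest", reward_spend=10_000, value_returned=4_000),
    RewardCampaign("crafting_event", reward_spend=5_000, value_returned=6_000),
]

# Rank campaigns so the budget can shift toward the ones that earn their keep.
for c in sorted(campaigns, key=rors, reverse=True):
    print(f"{c.name}: RORS = {rors(c):.2f}")
```

Under this framing, a campaign with RORS below 1 costs more than it brings back, which is exactly the kind of spend a distribution-first model never questions.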

At its core, Pixels is a data-driven reward system. Not all players are treated the same, and that’s the point. The system implicitly segments behavior. It learns who is contributing to the ecosystem and who is simply extracting from it, then adjusts rewards accordingly. Over time, that changes what behavior becomes dominant.

That reframes the anti-bot problem entirely. It’s not really about detection in the traditional sense. The system isn’t asking, “Is this a bot?” It’s asking, “Is this behavior worth paying for?” In an adversarial environment where bots constantly evolve, that distinction matters more than identity. The system doesn’t need to perfectly detect bad actors. It needs to consistently make them unprofitable.

You can think of it as a loop. Players generate data. Data feeds reward allocation. Rewards shift toward behaviors that improve retention and depth. Those players stay longer and engage more. That creates better data, which sharpens the system further. It’s a feedback loop designed to reinforce the right kind of participation.
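One round of that loop can be sketched as: score each player on observed behavior, then split a fixed reward budget in proportion to those scores. The scoring heuristic below (days active weighted by the share of rewards reinvested rather than sold) is entirely hypothetical, as are `PlayerStats`, `contribution_score`, and `allocate`; Pixels has not published its model, and a real system would use far richer signals.

```python
from dataclasses import dataclass


@dataclass
class PlayerStats:
    player_id: str
    days_active: int      # hypothetical retention signal
    tokens_spent: float   # hypothetical: rewards reinvested through in-game sinks
    tokens_sold: float    # hypothetical: rewards withdrawn and sold


def contribution_score(p: PlayerStats) -> float:
    """Hypothetical heuristic: weight retention by the share of rewards reinvested."""
    total = p.tokens_spent + p.tokens_sold
    # With no token history yet, treat the player as neutral (0.5).
    reinvest_ratio = p.tokens_spent / total if total > 0 else 0.5
    return p.days_active * reinvest_ratio


def allocate(budget: float, players: list[PlayerStats]) -> dict[str, float]:
    """One round of the loop: split a fixed reward budget in proportion to score."""
    scores = {p.player_id: contribution_score(p) for p in players}
    total = sum(scores.values())
    if total == 0:
        # No usable signal yet: fall back to an equal split.
        return {pid: budget / len(scores) for pid in scores}
    return {pid: budget * s / total for pid, s in scores.items()}


players = [
    PlayerStats("farm_bot", days_active=30, tokens_spent=0, tokens_sold=1_000),
    PlayerStats("builder", days_active=20, tokens_spent=800, tokens_sold=200),
    PlayerStats("casual", days_active=5, tokens_spent=50, tokens_sold=50),
]

for pid, amount in allocate(1_000, players).items():
    print(f"{pid}: {amount:.1f}")
```

Note that the sketch never asks whether `farm_bot` is a bot. It is the most active account, but because it sells everything, its share of the budget drops to zero. Running the allocation again on next round’s data is what closes the loop.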

Compare that to the standard GameFi loop. Users come in, farm aggressively, sell rewards, and leave. Price drops, incentives weaken, and the next wave of users has even less reason to stay. It’s a negative loop that compounds quickly. The system doesn’t adapt. It just drains.

What Pixels is building looks more like a live reward engine operating under constant pressure. Not just a game, not just a token, but a system trying to price behavior correctly in real time. And if this expands beyond a single game, the advantage compounds. More games bring more players. More players generate more data. Better data leads to more precise reward allocation. That’s where the publishing flywheel starts to matter.

But none of this works without scale. Early on, data is thin. Signal is noisy. It’s harder to distinguish between genuinely valuable behavior and highly optimized extraction. And if the system gets that wrong too often, it risks reinforcing the very patterns it’s trying to suppress. In that sense, the system isn’t just adaptive; it’s also fragile in its early stages.

There’s also a deeper tension here. If rewards are constantly optimized, players will adapt. They’ll search for new edges, new patterns, new ways to maximize outcomes. The system evolves, but so do the users. It becomes an ongoing negotiation between incentives and behavior, not a fixed design. In an adversarial environment, equilibrium doesn’t really exist.

The token sits right in the middle of this dynamic. $PIXEL can’t just function as emission. If it does, then even a well-optimized system eventually feeds into the same pressure: supply expanding faster than demand. Without strong sinks and real in-game utility, optimization only delays the outcome. It doesn’t change it.
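The arithmetic behind that point is simple: better targeting changes who receives emissions, not how many tokens enter circulation. Only sinks change the net flow. The sketch below is a deliberately simplified linear model with constant daily emission and sink volumes; demand is not modeled, and every number is hypothetical rather than taken from $PIXEL’s actual schedule.

```python
def circulating_supply(initial: float, daily_emission: float,
                       daily_sink: float, days: int) -> list[float]:
    """Track circulating supply when rewards emit tokens and sinks remove them."""
    supply = float(initial)
    history = [supply]
    for _ in range(days):
        # Emissions add to circulation; sinks (crafting, upgrades, fees) take tokens out.
        supply = max(0.0, supply + daily_emission - daily_sink)
        history.append(supply)
    return history


# Hypothetical numbers: same emission, different sink strength.
weak_sinks = circulating_supply(1_000_000, daily_emission=10_000, daily_sink=4_000, days=30)
strong_sinks = circulating_supply(1_000_000, daily_emission=10_000, daily_sink=10_000, days=30)
print(f"weak sinks:   {weak_sinks[-1]:,.0f}")
print(f"strong sinks: {strong_sinks[-1]:,.0f}")
```

With weak sinks, supply grows by 6,000 tokens a day no matter how precisely those tokens are allocated. Only when sinks match emissions does circulation stay flat.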

Which brings everything back to retention. Not short-term spikes, not reward bursts, but actual player behavior over time. Do players come back when rewards fluctuate? Do they engage when there’s no obvious optimal path? Because utility only works if someone shows up again tomorrow.

So Pixels doesn’t really look like an anti-bot system when you zoom out. It looks like an attempt to allocate capital intelligently in a hostile environment. To reward behavior that compounds. To filter value not by identity, but by contribution.

Concept makes sense.

Execution is hard.

The environment is adversarial.

If behavior holds, everything else follows.